Database, API Latency, API Performance, Optimization, Monitoring Tools

Ultimate Guide to API Latency and Throughput

Learn how to enhance API performance through metrics like latency and throughput, and discover key strategies for optimization.

by Kevin McGahey • June 25, 2025

Microservices, Database

4 Microservices Examples: Amazon, Netflix, Uber, and Etsy

by Terence Bennett • April 10, 2025

Database

Add a REST API to Your IBM DB2 Database in Four Easy Steps

by Kevin McGahey • November 25, 2024

Software Architecture, Database, API

Multi-Tenant vs. Single-Tenant Systems: Which Is the Optimal Choice?

by Spencer Nguyen • November 18, 2024

Database

DreamFactory DB Functions: Using SQL Functions to Improve Your API Calls

by Nic Davidson • September 4, 2024

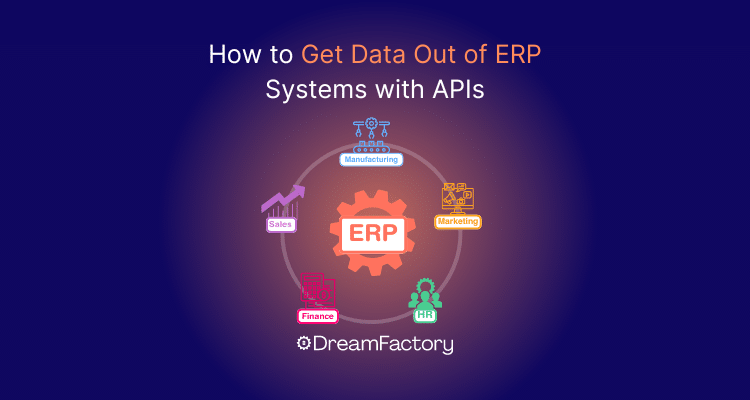

Enterprise Resource Planning (ERP) System, Database, Data, API, ERP

How to Get Data Out of ERP Systems with APIs

by Spencer Nguyen • May 6, 2024

Database APIs, Database Management, Database, Cybersecurity, API

Cybersecurity Risks of Direct Database Connectors

by Spencer Nguyen • April 13, 2023