4 Microservices Examples: Amazon, Netflix, Uber, and Etsy

by Terence Bennett • April 10, 2025

In this article, we’ll explore the microservices journeys of these wildly successful enterprises. We’ll also examine why microservices have become a cornerstone for modern IT strategies and how they continue to evolve. But first, let’s look at the general circumstances that inspire enterprises to use microservices in the first place.

Key Insights About Microservices:

- Microservices are an approach to building applications consisting of multiple small, independent services.

- Microservices in Java refer to a software architecture pattern where an application is built as a collection of small, independent services.

- Enterprises like Amazon, Netflix, Uber, and Etsy have adopted microservices to enhance scalability, foster innovation, and ensure long-term growth.

- Microservices offer benefits such as agility, pluggability, scalability, and the ability to adapt to changing business requirements.

What Are Microservices?

The best way to introduce this concept is to quote James Lewis and Martin Fowler, who played a key role in promoting microservices as an architectural style. Their authoritative article, "Microservices," defines the style as an approach to building a single application as a suite of small services, each running in its own process and communicating through lightweight mechanisms, often an HTTP resource API. These services are built around specific business capabilities and are independently deployable using automated tools.

What Are Microservices in Java?

Microservices in Java refers to a software architecture pattern where an application is built as a collection of small, independent, and loosely coupled services that work together to provide complete functionality. Each microservice is a standalone component that can be developed, deployed, and scaled independently, allowing for greater flexibility and scalability in large-scale applications.

In the context of Java, microservices are typically implemented using frameworks and tools that support distributed systems development. Some popular Java-based frameworks for building microservices include Spring Boot, Dropwizard, and Micronaut. These frameworks simplify tasks such as service orchestration, monitoring, and fault tolerance while ensuring high performance.

Here are some key characteristics and principles associated with microservices in Java:

- Autonomy: Each microservice is a self-contained unit with its own dedicated business functionality, database, and communication mechanisms.

- Lightweight Communication: Microservices communicate with each other using lightweight protocols such as REST (Representational State Transfer) or messaging systems like RabbitMQ or Apache Kafka. This allows services to be deployed and scaled independently without tight coupling.

- Scalability and Resilience: Microservices allow for horizontal scaling by replicating individual services across multiple servers to handle increased load. This improves resilience and ensures high availability.

- Polyglot Persistence: Microservices give developers flexibility to choose different types of databases or data storage technologies that best suit each service's requirements.

- Continuous Delivery and DevOps: Microservices promote DevOps practices by enabling teams to independently develop, test, and deploy services using CI/CD pipelines.

When building microservices in Java, it is important to consider aspects such as service discovery, load balancing, fault tolerance, monitoring, security measures like authentication/authorization, and centralized logging.
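To make service discovery and load balancing more concrete, here is a minimal in-memory sketch. It is not how any particular framework implements these features; the `ServiceRegistry` class, its method names, and the addresses used with it are hypothetical, and a production system would normally rely on a dedicated registry and health checks instead.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical in-memory registry with client-side round-robin load balancing. */
final class ServiceRegistry {
    private final Map<String, List<String>> instances = new ConcurrentHashMap<>();
    private final Map<String, AtomicInteger> cursors = new ConcurrentHashMap<>();

    /** Called by a service instance when it starts up. */
    void register(String serviceName, String address) {
        instances.computeIfAbsent(serviceName, k -> new CopyOnWriteArrayList<>()).add(address);
    }

    /** Called when an instance shuts down or is considered unhealthy. */
    void deregister(String serviceName, String address) {
        List<String> list = instances.get(serviceName);
        if (list != null) {
            list.remove(address);
        }
    }

    /** Returns the next instance address for the service in round-robin order. */
    String next(String serviceName) {
        // Work on a snapshot so a concurrent deregister cannot shrink the list mid-lookup.
        List<String> snapshot = List.copyOf(instances.getOrDefault(serviceName, List.of()));
        if (snapshot.isEmpty()) {
            throw new IllegalStateException("no instances registered for " + serviceName);
        }
        int turn = cursors.computeIfAbsent(serviceName, k -> new AtomicInteger()).getAndIncrement();
        return snapshot.get(Math.floorMod(turn, snapshot.size()));
    }
}
```

A caller would register each instance at startup, for example `registry.register("payment", "10.0.0.1:8080")`, and then ask `registry.next("payment")` before every outgoing request so that load spreads across the replicas.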

Why Do Enterprises Adopt Microservices Architecture?

Some of the most innovative and profitable enterprises in the world – like Amazon, Netflix, Uber, and Etsy – attribute their IT initiatives’ enormous success in part to the adoption of microservices. Over time these enterprises dismantled their monolithic applications to create modular systems capable of scaling faster while adapting to market demands.

Most enterprises start by designing their infrastructures as a single monolith or several tightly interdependent monolithic applications. The monolith carries out many functions. All programming for those functions resides in a cohesive piece of application code.

Since the code for these functions is woven into one piece, it’s difficult to untangle. Changing or adding a single feature in a monolith can disrupt the code for the entire application. This makes any type of upgrade—a simple one included—a time-consuming process.

Over time companies cannot make further changes without starting over from scratch. The process rapidly becomes overwhelming and limits innovation while increasing technical debt.

Building a Microservices Architecture

At this point developers may choose to divide a monolith's functionality into small independently running microservices. The microservices loosely connect via APIs to form a microservices-based application architecture. This modular approach enables enterprises to innovate faster while minimizing disruptions during upgrades or scaling efforts.

As you can see there are many benefits to microservices. They are more likely to be used by enterprises that have outgrown monolith systems; however many companies now design their architectures with microservices from the beginning to future-proof their IT infrastructure.

Here are the steps to designing a microservices architecture:

1. Understand the monolith

Study the operation of the monolith and determine the component functions and services it performs. Since all the functions will be mixed together, this may pose a challenge. It is an important part of determining what is needed for the microservices, so it should be the first thing developers focus on.

2. Develop the microservices

Develop each function of the application as an autonomous, independently running microservice. These usually run in a container on a cloud server. Each microservice answers to a single function — search, shipping, payment, accounting, payroll, etc. This allows for minor changes to be made without disrupting the other processes.

3. Integrate the larger application

Loosely integrate the microservices via API gateways, so they work in concert to form the larger application. An iPaaS like DreamFactory can play an essential role in this step. Each microservice will work with the others to provide the necessary functions. Each section can be adjusted, adapted, or even removed without too much impact on the other parts of the application.

4. Allocate system resources

Use container orchestration tools like Kubernetes to manage the allocation of system resources for each microservice. This step helps keep everything organized and ensures the entire system works as a whole.

*Read our complete guide to microservices for more detailed information on this application architecture.

Examples of Microservices in Action

You may be wondering how this all works in practical applications. Let’s look at some examples of microservices in action. The enterprises below used microservices to resolve key scaling and server processing challenges.

1. Amazon

Amazon is known as an Internet retail giant, but it didn’t start that way. In the early 2000s, Amazon’s retail website behaved like a single monolithic application.

The tight connections between — and within — the multi-tiered services that comprised Amazon’s monolith meant that developers had to carefully untangle dependencies every time they wanted to upgrade or scale Amazon’s systems. It was a painstaking process that cost plenty of money and required time to adjust.

Previously, Amazon found that the monolith structure worked very well. However, the code base quickly expanded as more developers joined the team. There were too many updates and projects coming in, which had a negative impact on the software development and function. With such obvious drops in productivity, it was necessary to look at a better way of doing things.

In 2001, development delays, coding challenges, and service interdependencies inhibited Amazon’s ability to meet the scaling requirements of its rapidly growing customer base. The company and its site were exploding, but there was no way to keep up with the growth.

Faced with the need to refactor its system from scratch, Amazon broke its monolithic applications into small, independently running, service-specific applications. The use of microservices immediately changed how the company worked. It was able to change individual features and resources, which made immediate, massive improvements to the site's functionality.

Here’s how Amazon did it:

Developers analyzed the source code and pulled out units of code that served a single, functional purpose. As expected, this was a time-consuming process since all the code was mixed up together, and the various functions of the site were intertwined. However, the developers worked hard to sort it out and determine which sections could be turned into microservices.

Once they had the separate sections of code, the developers wrapped these units in a web service interface. For example, they developed a single service for the Buy button on a product page, a single service for the tax calculator function, and so on. Each function had its own section.
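As an illustration of what wrapping a unit of code in a web service interface can look like, here is a small sketch using the HTTP server bundled with the JDK. It is not Amazon's code: the `TaxService` class, the `/tax` path, and the `amount` and `rate` query parameters are all assumptions made for the example.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.math.BigDecimal;
import java.math.RoundingMode;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;

/** Hypothetical tax calculator exposed as its own small web service. */
final class TaxService {
    /** The extracted unit of business logic: tax owed on an amount at a given rate. */
    static BigDecimal calculateTax(BigDecimal amount, BigDecimal rate) {
        return amount.multiply(rate).setScale(2, RoundingMode.HALF_UP);
    }

    /** Wraps calculateTax in an HTTP interface, e.g. GET /tax?amount=100.00&rate=0.08 */
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/tax", exchange -> {
            String body;
            int status = 200;
            try {
                String query = exchange.getRequestURI().getQuery();
                BigDecimal amount = new BigDecimal(param(query, "amount"));
                BigDecimal rate = new BigDecimal(param(query, "rate"));
                body = "{\"tax\":" + calculateTax(amount, rate).toPlainString() + "}";
            } catch (RuntimeException e) {
                // Missing or malformed parameters end up here.
                status = 400;
                body = "{\"error\":\"expected ?amount=<decimal>&rate=<decimal>\"}";
            }
            byte[] bytes = body.getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().add("Content-Type", "application/json");
            exchange.sendResponseHeaders(status, bytes.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(bytes);
            }
        });
        server.start();
        return server;
    }

    /** Extracts one query parameter; throws if it is absent. */
    private static String param(String query, String name) {
        if (query != null) {
            for (String pair : query.split("&")) {
                String[] kv = pair.split("=", 2);
                if (kv.length == 2 && kv[0].equals(name)) {
                    return kv[1];
                }
            }
        }
        throw new IllegalArgumentException("missing parameter: " + name);
    }
}
```

Calling `TaxService.start(8080)` starts the service. Other parts of the site then depend only on the HTTP contract, so the team that owns the calculator can change its internals without touching its callers.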

Amazon assigned ownership of each independent service to a team of developers, allowing them to view development bottlenecks more granularly. They could resolve challenges more efficiently since a small number of developers could direct all of their attention to a single service. That one service wouldn’t affect everything else, either, so it was easier to work on.

Amazon’s “service-oriented architecture” was largely the beginning of what we now call microservices. It led to Amazon developing a number of solutions to support microservices architectures, such as Amazon AWS and Apollo, which it currently sells to enterprises throughout the world. Without its transition to microservices, Amazon could not have grown to become one of the most valuable companies in the world, valued by market cap at $1.6 trillion as of August 1, 2022.

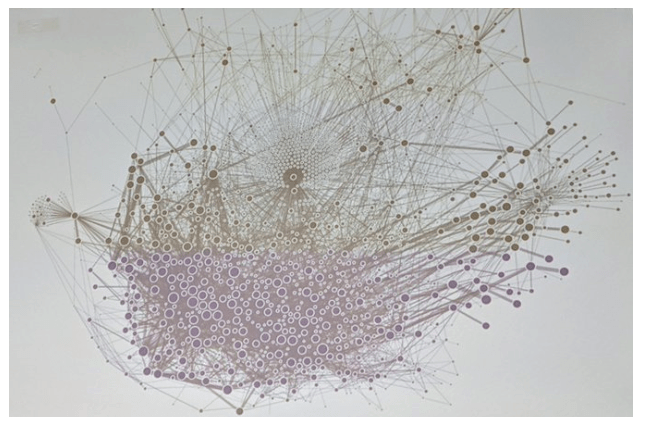

This is a 2008 graphic of Amazon’s microservices infrastructure, aka the Death Star. It shows how many independent services, and how many connections between them, the architecture had grown to include.

2. Netflix

Amazon wasn’t the only company to pioneer the world of microservices. Netflix is another company that has found success through the use of microservices connected with APIs.

Similar to Amazon, this microservices example began its journey in 2008 before the term "microservices" had come into fashion. Netflix started its movie-streaming service in 2007. By 2008 it was suffering from service outages and scaling challenges; for three days, it was unable to ship DVDs to members.

At this point, the company still depended heavily on physical DVDs, which limited how well it could serve its customers. Its streaming service was only a year old, and the online business, while thriving, was difficult to run. The monolithic design stopped working well beyond a certain scale. A microservices architecture was a much better option, but the term didn’t truly exist yet.

According to a Netflix vice president:

"Our journey to the cloud at Netflix began in August of 2008, when we experienced a major database corruption and for three days could not ship DVDs to our members. That is when we realized that we had to move away from vertically scaled single points of failure, like relational databases in our datacenter, towards highly reliable, horizontally scalable, distributed systems in the cloud. We chose Amazon Web Services (AWS) as our cloud provider because it provided us with the greatest scale and the broadest set of services and features." (source)

In 2009, Netflix began the gradual process of refactoring its monolithic architecture, service by service, into microservices. The first step was to migrate its non-customer-facing, movie-encoding platform to run on Amazon AWS cloud servers as an independent microservice. Netflix spent the following two years converting its customer-facing systems to microservices, finalizing the process in 2012.


Here’s a diagram of Netflix’s gradual transition to microservices:

Refactoring to microservices allowed Netflix to overcome its scaling challenges and service outages. By 2013, Netflix’s API gateway was handling two billion daily API edge requests, managed by over 500 cloud-hosted microservices. By 2017, its architecture consisted of over 700 loosely coupled microservices. Today, Netflix makes around $8 billion a quarter and streams approximately six billion hours of content weekly to more than 220 million subscribers in 190 countries, and it continues to grow.

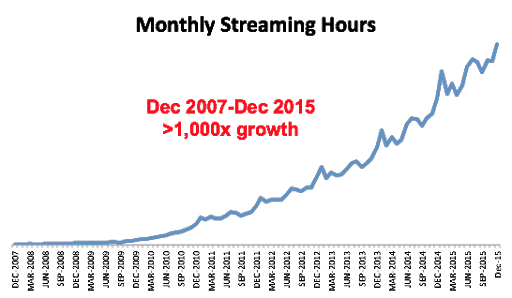

Here’s a visual depiction of Netflix’s growth from 2007 to 2015:

But that’s not all. Netflix received another benefit from microservices: cost reduction. According to the enterprise, its “cloud costs per streaming start ended up being a fraction of those in the data center, a welcome side benefit.” The company has also led VPN and proxy crackdowns around the world, stopping users from viewing content through proxy servers even though the streaming service was available worldwide. It has also changed the way people watch television shows: these days, you have to subscribe to a specific service to receive the shows you want, thanks to Netflix initiating the streaming wars. Microservices architecture is behind it all.

This is Netflix Senior Engineer Dave Hahn proudly showing off the Netflix microservices architecture:

3. Uber

Despite being introduced to the world more recently than either of our previous examples, Uber also began with a monolith design.

This microservice example came not long after the launch of Uber, when the ride-sharing service encountered growth hurdles related to its monolithic application structure. The platform struggled to develop and launch new features efficiently, fix bugs, and integrate its rapidly growing global operations. Moreover, the complexity of Uber’s monolithic application architecture required developers to have extensive experience with the existing system just to make minor updates and changes.

Here’s how Uber’s monolithic structure worked at the time:

- Passengers and drivers connected to Uber’s monolith through a REST API.

- There were three adapters with embedded APIs for functions like billing, payment, and text messages.

- There was a MySQL database.

- All features were contained in the monolith.

This design was clunky and difficult to make changes to. For the developers, the ride share’s popularity almost immediately caused problems. The company grew too fast to easily keep up with the app's original design.

Here’s a diagram of Uber’s original monolith from DZone:

To overcome the challenges of its existing application structure, Uber decided to break the monolith into cloud-based microservices. Subsequently, developers built individual microservices for functions like passenger management, trip management, and more. Similar to the Netflix example above, Uber connected its microservices via an API gateway.

The change was faster in this case, since it happened earlier in the life of the business. There were fewer functions mixed into the monolith design, and this made it simpler to change things around when it was time to switch.

Here’s a diagram of Uber’s microservices architecture from DZone:

Moving to this architectural style brought Uber myriad benefits. First, the developers were split into specific teams that had to focus on one service at a time. This ensured they became experts at their particular service. When there was an issue, they could fix it faster and without affecting the other service areas. The speed, quality, and manageability of new development immediately improved.

As Uber began to grow at an exponential speed, fast scaling was made easier. Teams could focus only on the services that needed to scale and leave the rest alone. Thanks to the microservices architecture, everything ran smoothly this way. Updating one service didn’t affect the others. The company also achieved more reliable fault tolerance.

However, there was a problem. Simply refactoring the monolith into microservices wasn’t the end of Uber’s journey. According to Uber’s site reliability engineer, Susan Fowler, the network of microservices needed a clear standardization strategy, or it would be in danger of “spiraling out of control.”

Fowler said that Uber’s first approach to standardization was to create local standards for each microservice. This worked well, in the beginning, to help it get microservices off the ground, but Uber found that the individual microservices couldn’t always trust the availability of other microservices in the architecture due to differences in standards. If developers changed one microservice, they usually had to change the others to prevent service outages. This interfered with scalability because it was impossible to coordinate new standards for all the microservices after a change.

In the end, Uber decided to develop global standards for all microservices. This once again changed everything for the company.

First, they analyzed the principles that resulted in availability — like fault tolerance, documentation, performance, reliability, stability, and scalability. Once they’d identified these, they began to establish quantifiable standards. These were measurable and designed to be followed. For example, the developers could look at business metrics, including webpage views and searches.

Finally, they converted the metrics into requests per second on a microservice. While it wasn’t a rapid change, it was a very necessary one. Uber appeared to be growing on the outside, but there was a real struggle on the inside to keep it in a state of growth without outages and service shortfalls.
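The arithmetic behind that conversion can be sketched as follows. This is only an illustration, not Uber's actual method: the `CapacityEstimate` class, the number of requests each business event triggers, and the peak-to-average ratio are all hypothetical inputs that a team would have to measure for its own service.

```java
/** Hypothetical helper that turns a business metric into a load target for one microservice. */
final class CapacityEstimate {
    private static final double SECONDS_PER_DAY = 86_400.0;

    /**
     * @param eventsPerDay       business-level metric, e.g. searches or page views per day
     * @param requestsPerEvent   how many calls to this microservice one event triggers (assumed)
     * @param peakToAverageRatio how much busier the peak is than the daily average (assumed)
     * @return the requests per second the service should sustain at peak
     */
    static double peakRequestsPerSecond(long eventsPerDay, double requestsPerEvent, double peakToAverageRatio) {
        double averageRps = eventsPerDay * requestsPerEvent / SECONDS_PER_DAY;
        return averageRps * peakToAverageRatio;
    }
}
```

For example, 8,640,000 searches a day average out to 100 per second. If each search triggers two calls to a given microservice and peak traffic is three times the average, that service needs to handle about 600 requests per second.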

According to Fowler, developing and implementing global standards for a microservices architecture like this is a long process; however, for Fowler, it was worth it because implementing global standards was the final piece of the puzzle that solved Uber’s scaling difficulties. “It is something you can hand developers, saying, ‘I know you can build amazing services, here’s a system to help you build the best service possible.’ And developers see this and like it,” Fowler said.

Here’s a diagram of Uber’s microservices architecture from 2019:

4. Etsy

Etsy’s transition to a microservices-based infrastructure came after the e-commerce platform started to experience performance issues caused by poor server processing time. The company’s development team set the goal of reducing processing to “1,000-millisecond time-to-glass” (i.e., the amount of time it takes for the screen to update on the user’s device). Etsy then decided that concurrent transactions were the only way to cut processing time enough to achieve this goal. However, the limitations of its PHP-based system made concurrent API calls virtually impossible.

Etsy was stuck in the sluggish world of sequential execution. Not only that, but developers needed to boost the platform’s extensibility for Etsy’s new mobile app features. To solve these challenges, the API team needed to design a new approach — one that kept the API both familiar and accessible for development teams.

Guiding Inspiration

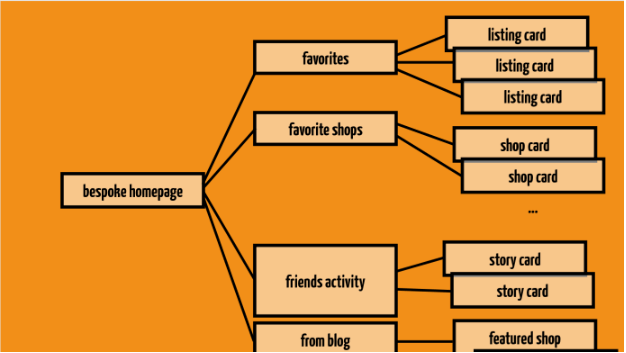

Taking cues from Netflix and other microservices adopters, Etsy implemented a two-layer API with meta-endpoints. Each of the meta-endpoints aggregated additional endpoints. At the risk of getting more technical, InfoQ notes that this strategy enabled “server-side composition of low-level, general-purpose resources into device- or view-specific resources,” which resulted in the following:

- The full stack created a multi-level tree.

- The customer-facing website and mobile app composed themselves into a custom view by consuming a layer of concurrent meta-endpoints.

- The concurrent meta-endpoints call the atomic component endpoints.

- The non-meta-endpoints at the lowest level are the only ones that communicate with the database.

At this point, a lack of concurrency was still limiting Etsy’s processing speed. The meta-endpoints layer simplified and sped up the process of generating a bespoke version of the website and mobile app; however, sequential processing of multiple meta-endpoints still got in the way of meeting Etsy’s performance goals.

Eventually, the engineering team achieved API concurrency by using cURL for parallel HTTP calls. In addition to this, they also created a custom Etsy libcurl patch and developed monitoring tools. These show a request’s call hierarchy as it moves across the network. Further, Etsy also created various developer-friendly tools around the API to make things easier for developers and speed up the adoption of its two-layer API.
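The idea of a meta-endpoint that calls its component endpoints concurrently can be sketched in a few lines. Etsy's implementation was PHP with parallel cURL calls; the version below swaps in Java's `CompletableFuture` purely for illustration, and the `MetaEndpoint` class and the component names used with it are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.function.Supplier;

/** Hypothetical meta-endpoint that aggregates several component endpoints into one view. */
final class MetaEndpoint {
    private final Map<String, Supplier<String>> components = new LinkedHashMap<>();
    private final ExecutorService pool;

    MetaEndpoint(ExecutorService pool) {
        this.pool = pool;
    }

    /** Registers a component endpoint; the supplier stands in for an HTTP call. */
    MetaEndpoint component(String name, Supplier<String> endpoint) {
        components.put(name, endpoint);
        return this;
    }

    /** Starts every component call at once, then collects results in registration order. */
    Map<String, String> fetch() {
        Map<String, CompletableFuture<String>> pending = new LinkedHashMap<>();
        components.forEach((name, endpoint) ->
                pending.put(name, CompletableFuture.supplyAsync(endpoint, pool)));

        Map<String, String> view = new LinkedHashMap<>();
        // join() blocks only until that particular call finishes; the others keep running.
        pending.forEach((name, future) -> view.put(name, future.join()));
        return view;
    }
}
```

With sequential execution, the total time is the sum of all component calls. With this fan-out, and enough threads in the pool, it is roughly the time of the slowest one, which is the kind of gain Etsy was after.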

Etsy went live with the architectural style in 2016. Since then, the enterprise has benefited from a structure that supports continual innovation, concurrent processing, faster upgrades, and easy scaling. It stands as a successful microservices example.

Here’s a slide depicting Etsy’s multi-level tree from a presentation by Etsy software engineer Stefanie Schirmer:

Benefits of Microservices

Using a microservices approach offers several benefits, including improved scalability, improved application stability, and faster deployment times. Here, we will explore some of the most significant advantages of using microservices and discuss how they can help organizations build more robust and efficient applications.

Easy to Scale:

Since they run independently, developers can focus on scaling only the microservices that need it.

Platform and language agnostic:

Developers are free to program microservices in virtually any language and run them on any platform, then use an iPaaS (Integration Platform as a Service) tool to connect them. This allows developers to choose the languages and platforms that best match the needs of their team.

Improved application stability:

The failure of one microservice is less likely to cause a cascading system failure. Even when microservices invoke other services synchronously, developers can stop failure cascades by implementing features like a “circuit breaker” to prevent the calling service from using up system resources while waiting for an unresponsive service.
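Here is a minimal sketch of the circuit breaker idea, assuming that a failed remote call surfaces as a runtime exception. The `CircuitBreaker` class and its parameters are hypothetical; real libraries add timeouts, metrics, and finer-grained half-open handling.

```java
import java.util.function.Supplier;

/** Hypothetical circuit breaker: fails fast after repeated errors, retries after a cool-down. */
final class CircuitBreaker {
    private final int failureThreshold;
    private final long coolDownMillis;
    private int consecutiveFailures;
    private boolean open;
    private long openedAtMillis;

    CircuitBreaker(int failureThreshold, long coolDownMillis) {
        this.failureThreshold = failureThreshold;
        this.coolDownMillis = coolDownMillis;
    }

    /** Runs remoteCall unless the breaker is open; returns the fallback on failure or while open. */
    synchronized <T> T call(Supplier<T> remoteCall, Supplier<T> fallback) {
        if (open) {
            if (System.currentTimeMillis() - openedAtMillis < coolDownMillis) {
                return fallback.get(); // fail fast without touching the remote service
            }
            open = false; // cool-down elapsed: let one trial call through
        }
        try {
            T result = remoteCall.get();
            consecutiveFailures = 0; // a success closes the breaker fully
            return result;
        } catch (RuntimeException e) {
            consecutiveFailures++;
            if (consecutiveFailures >= failureThreshold) {
                open = true;
                openedAtMillis = System.currentTimeMillis();
            }
            return fallback.get();
        }
    }

    /** True while the breaker is rejecting calls (updated on the next call after the cool-down). */
    synchronized boolean isOpen() {
        return open;
    }
}
```

Once the threshold is reached, callers get the fallback immediately instead of tying up threads on a service that is known to be failing. The `synchronized` methods keep the sketch simple at the cost of serializing calls, and an unresponsive service would also need a timeout on the remote call itself.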

Better security and compliance:

Microservices only expose the data they need to share. This empowers developers to securely control access to financial, health, accounting, and other private information – which facilitates the process of complying with HIPAA, GDPR, and other standards.

Easier to organize development teams:

When implementing a microservices architecture, managers can break down the development of each microservice into smaller and more manageable tasks that can be handled by smaller teams. This approach allows teams to work independently without waiting for other teams to finish their work, reducing concerns about coding conflicts. Additionally, managers can outsource the development of specific microservices to external vendors or utilize prebuilt SaaS microservices to complete their applications. By leveraging these strategies, organizations can speed up development and reduce the time-to-market for their applications.

Faster, more flexible product development cycles:

The microservices architecture facilitates and speeds up the CI/CD (continuous integration, continuous delivery, and continuous deployment) development cycle. This means that you can launch or deliver an application or individual microservice as soon as it offers use-value, and continue developing the application to roll out additional functions, services, and security updates over time. If the microservice is a cloud-based SaaS, developers can automatically update the application for all users as soon as new versions are available.

Improved business agility:

Microservices give enterprises more agility to experiment with different services and features without incurring significant business risks or exorbitant development costs.

Future-proof:

Development teams can prevent microservices-based applications from becoming obsolete by keeping them in a state of constant innovation. They can continually roll out updates without interrupting application performance.

A DevOps-friendly architecture:

Since application tweaks and upgrades happen faster and easier, the microservices model supports a DevOps-friendly philosophy. Users offer feedback and requests, and quickly see their feedback implemented in the next upgrade.

Cons of Microservices

While microservices offer many advantages over traditional monolithic architectures, there are also some potential drawbacks to consider. The use of microservices can increase complexity and overhead, which may lead to more significant operational and maintenance costs. Additionally, managing multiple independent services can be challenging, and communication between them may introduce latency and overhead. These challenges require careful planning and robust tools to mitigate risks and optimize performance.

Connecting and Integrating Can Be Tedious

In a microservices architecture, messaging and APIs are essential for integrating data between individual services. However, manually coding APIs for each microservice can be a time-consuming and expensive task. This process often becomes a bottleneck for teams aiming to scale quickly. Fortunately, there are advanced integration Platform-as-a-Service (iPaaS) solutions, such as DreamFactory, that provide automatic API generation features. These features allow developers to create REST APIs from any database with just a few clicks, significantly reducing the time and effort required for API development. With these tools, integration tasks that previously took weeks can now be completed in minutes, making it easier and faster to connect microservices with each other. By leveraging advanced iPaaS solutions, organizations can save on development costs and accelerate the integration of their microservices.

The Challenge of Refactoring a Monolithic Application

Successfully dismantling and refactoring a monolithic application without affecting its external operation isn’t easy. Developers need to identify and fully understand all of the application’s components. They also need to understand the relationships that exist between them, how they operate, and how users interact with them. A thorough understanding of dependencies is critical for avoiding disruptions during this process. Developers and engineers also need to collaborate closely with users to ensure that any interface changes do not negatively impact application functionality. Without strict adherence to the DevOps or CI/CD philosophy of application development, refactoring to microservices could end in failure.

The Challenge of Deploying All Microservices at Once

Dividing a monolithic application into its component parts and redesigning the application as a cluster of loosely connected microservices means that you’re dealing with a lot of different moving parts. Some of these parts will take longer to finalize than others, making it difficult to deploy all of them at the same time. This challenge is amplified when services are written in different programming languages or deployed on diverse platforms. To address this issue, teams often adopt incremental deployment strategies or use feature flags to manage partially deployed systems.
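A feature flag can be as simple as a named switch that decides whether a request goes to the new microservice or stays on the legacy code path. The sketch below assumes an in-memory flag store; the `FeatureFlags` class and the flag names used with it are hypothetical, and real deployments usually read flags from a shared configuration service.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Supplier;

/** Hypothetical in-memory feature flag store for routing between old and new code paths. */
final class FeatureFlags {
    private final Map<String, Boolean> flags = new ConcurrentHashMap<>();

    /** Turns a flag on or off at runtime, with no redeploy. */
    void set(String name, boolean enabled) {
        flags.put(name, enabled);
    }

    /** Unknown flags default to off, so unfinished services stay dark. */
    boolean isEnabled(String name) {
        return flags.getOrDefault(name, false);
    }

    /** Calls the new service only when its flag is on; otherwise uses the legacy path. */
    <T> T route(String flag, Supplier<T> newService, Supplier<T> legacyPath) {
        return isEnabled(flag) ? newService.get() : legacyPath.get();
    }
}
```

This lets a team deploy a half-migrated system safely: the new service ships whenever it is ready, and traffic moves over only when someone flips the flag, which can be flipped back just as quickly.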

Latency Challenges Require Constant Monitoring and Tweaking

Development teams need to keep a close eye on latency issues by strategically controlling the distribution of system resources among the different microservices. This requires strategic container orchestration and workload placement, but some teams don’t have the necessary skills for this. To overcome this hurdle, leveraging tools like Kubernetes or service meshes (e.g., Istio) can simplify resource management while improving performance monitoring across services.

Implementing Microservices

Implementing microservices effectively requires a strategic approach to ensure scalability, reliability, and maintainability. Start by using Domain-Driven Design (DDD) to decompose your application into specific business capabilities, defining clear boundaries for each microservice to keep them cohesive and loosely coupled. An API Gateway should be implemented to manage and route requests to the appropriate microservices, centralizing cross-cutting concerns such as authentication, logging, and rate limiting—all of which simplify service management while enhancing security.
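To show what an API gateway does at its core, here is a stripped-down, in-memory sketch: it checks a credential once at the edge and then routes by path prefix. The `ApiGateway` class, its `Request` and `Response` records, and the API-key check are all hypothetical simplifications; a real gateway would forward HTTP traffic and typically validate OAuth2 tokens or JWTs.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

/** Hypothetical gateway: one entry point that authenticates, then routes by path prefix. */
final class ApiGateway {
    record Request(String path, String apiKey) {}
    record Response(int status, String body) {}

    private final Map<String, Function<Request, Response>> routes = new LinkedHashMap<>();
    private final Set<String> validKeys;

    ApiGateway(Set<String> validKeys) {
        this.validKeys = validKeys;
    }

    /** Maps a path prefix to the microservice that owns it; the function stands in for a remote call. */
    ApiGateway route(String prefix, Function<Request, Response> service) {
        routes.put(prefix, service);
        return this;
    }

    Response handle(Request request) {
        // Cross-cutting concern handled once here instead of in every service.
        if (request.apiKey() == null || !validKeys.contains(request.apiKey())) {
            return new Response(401, "unauthorized");
        }
        // First registered prefix that matches wins.
        for (var route : routes.entrySet()) {
            if (request.path().startsWith(route.getKey())) {
                return route.getValue().apply(request);
            }
        }
        return new Response(404, "no route for " + request.path());
    }
}
```

Because authentication lives in the gateway, the services behind it can stay focused on their own business capability. Logging and rate limiting would slot into `handle` in the same way.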

For data management, adopt a decentralized approach where each microservice owns its data store. To maintain consistency across services without sacrificing autonomy, consider using patterns like the Saga pattern for distributed transactions. In terms of service communication, choose between synchronous methods like REST or gRPC for straightforward interactions or asynchronous methods such as message queues or Kafka for enhanced resilience and scalability.
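The Saga pattern mentioned above can be sketched as a list of local transactions, each paired with a compensating action that undoes it. The version below is an in-process illustration only: the `Saga` class and its step names are hypothetical, and in a real system each action would be a call or message to a separate service.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Hypothetical orchestrated saga: run steps in order, undo completed ones if a later step fails. */
final class Saga {
    /** One local transaction plus the action that compensates for it. */
    record Step(String name, Runnable action, Runnable compensation) {}

    private final List<Step> steps = new ArrayList<>();

    Saga step(String name, Runnable action, Runnable compensation) {
        steps.add(new Step(name, action, compensation));
        return this;
    }

    /** Returns true if every step succeeded; otherwise compensates in reverse order and returns false. */
    boolean execute() {
        Deque<Step> completed = new ArrayDeque<>();
        for (Step step : steps) {
            try {
                step.action().run();
                completed.push(step);
            } catch (RuntimeException e) {
                // The failed step itself is not compensated; only the steps that finished are.
                while (!completed.isEmpty()) {
                    completed.pop().compensation().run();
                }
                return false;
            }
        }
        return true;
    }
}
```

If an order saga creates the order, reserves stock, and then fails to charge the card, the stock is released and the order cancelled, in that order. Each service keeps its own data store, and consistency is reached through these compensations instead of a single distributed transaction.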

Containerization is essential; using Docker ensures consistent environments across all stages, while Kubernetes handles orchestration—allowing for automated scaling, self-healing, and streamlined deployments. Integrating a robust Continuous Integration/Continuous Deployment (CI/CD) pipeline is crucial for automating the building, testing, and deployment of microservices with comprehensive testing at each stage to catch issues early.

Observability should be a priority; integrate logging, monitoring, and tracing to gain visibility into system performance and quickly diagnose issues using tools like the ELK stack (Elasticsearch-Logstash-Kibana), Prometheus, and Jaeger. Security must be enforced at multiple levels: API security with OAuth2 or JWT; service-to-service communication security with mTLS; and encryption of data both in transit and at rest. Regular audits and updates to security policies are necessary to address evolving threats. By adopting these best practices, you can build a microservices architecture that is robust, scalable, and secure.

DreamFactory: Automatic REST API Generation for Rapidly Connecting Your Microservices Architecture

Reading the microservices examples above should help you understand the benefits, processes, and challenges of breaking a monolithic application to build a microservices architecture. However, one thing we haven't addressed is the time and expense of developing custom APIs for connecting the individual microservices that comprise this architectural style. That’s where the DreamFactory iPaaS can help.

Moreover, the DreamFactory iPaaS offers a point-and-click, no-code interface that simplifies the process of developing and exposing APIs to integrate your microservices application architecture. Try DreamFactory for free and start building APIs for microservices today!


Terence Bennett, CEO of DreamFactory, has a wealth of experience in government IT systems and Google Cloud. His impressive background includes being a former U.S. Navy Intelligence Officer and a former member of Google's Red Team. Prior to becoming CEO, he served as COO at DreamFactory Software.