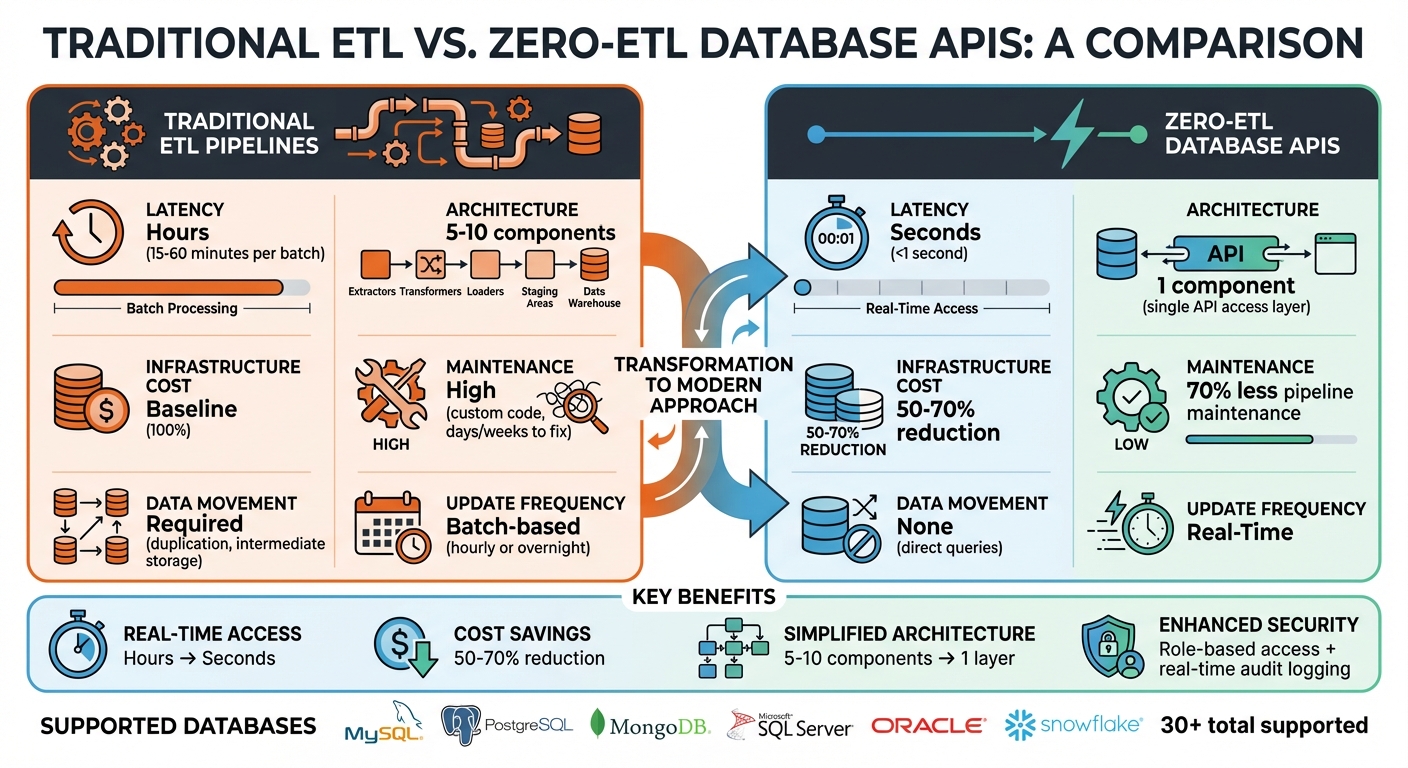

Zero-ETL Database APIs let you access live data instantly without needing traditional ETL processes. Instead of extracting, transforming, and loading data, these APIs query databases directly in real-time, significantly reducing delays that can span hours. Key features include federated querying (accessing multiple data sources simultaneously) and schema-on-read (applying schemas dynamically during queries). This approach enables faster insights, lowers costs, simplifies architecture, and enhances security.

Highlights:

- Real-Time Access: Latency reduced from hours to seconds.

- Cost Savings: Cuts infrastructure expenses by 50%-70%.

- Simplified Architecture: Eliminates complex ETL pipelines.

- Enhanced Security: Role-based access and real-time audit logging.

DreamFactory is a secure, self-hosted enterprise data access platform that provides governed API access to any data source, connecting enterprise applications and on-prem LLMs with role-based access and identity passthrough. This shift to Zero-ETL supports AI workflows, live dashboards, and legacy modernization, making real-time data more accessible and actionable.

Figure: Traditional ETL vs Zero-ETL Database APIs comparison

Why Enterprises Need Zero-ETL

Problems with Traditional ETL Pipelines

Traditional ETL pipelines often slow down modern enterprises. Their batch-based nature means data updates happen periodically - typically once an hour or even just overnight - leading to delays in analysis and decision-making. As data volumes increase, these delays become even harder to manage.

Another major issue is maintenance. When source schemas change, pipelines break, and data engineers are forced to rewrite custom code and redeploy infrastructure. This process isn’t quick - it can take days or even weeks to resolve. Swami Sivasubramanian, Vice President of AWS Data and Machine Learning, highlights the challenges:

"ETL can be challenging, time-consuming, and costly. It invariably requires data engineers to create custom code... a process that can take days - data analysts can't run interactive analysis or build dashboards, data scientists can't build machine learning (ML) models or run predictions, and end-users can't make data-driven decisions."

Additionally, traditional ETL architectures are highly complex. They involve multiple stages - extraction, transformation, and loading - that rely on staging areas and intermediate storage solutions like S3 buckets. This not only duplicates data but also drives up storage costs and creates governance headaches. These drawbacks underscore the need for a more streamlined approach to handling enterprise data.

How Zero-ETL Enables Real-Time Data Access

Zero-ETL offers a solution by using federated querying to directly access source data, eliminating the need for extensive data movement. With this method, SQL queries can span multiple databases simultaneously without relocating data. This drastically reduces both the complexity and inefficiencies of traditional pipelines.
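
As a rough illustration of the idea (not DreamFactory's implementation), the sketch below runs one SQL statement across two separate databases. It uses Python's built-in `sqlite3` module, with SQLite's `ATTACH DATABASE` standing in for a federated query engine. The table names (`orders`, `customers`) and the sample rows are hypothetical.

```python
import sqlite3


def federated_totals():
    """Join tables that live in two separate databases with one query."""
    conn = sqlite3.connect(":memory:")  # first database ("main")
    # Attach a second, independent in-memory database under the name "crm".
    conn.execute("ATTACH DATABASE ':memory:' AS crm")

    conn.execute("CREATE TABLE main.orders (customer_id INTEGER, amount REAL)")
    conn.execute("CREATE TABLE crm.customers (id INTEGER, name TEXT)")
    conn.executemany(
        "INSERT INTO main.orders VALUES (?, ?)",
        [(1, 40.0), (1, 60.0), (2, 25.0)],
    )
    conn.executemany(
        "INSERT INTO crm.customers VALUES (?, ?)",
        [(1, "Ada"), (2, "Grace")],
    )

    # One statement spans both databases; no rows are copied between them.
    rows = conn.execute(
        """
        SELECT c.name, SUM(o.amount)
        FROM main.orders AS o
        JOIN crm.customers AS c ON c.id = o.customer_id
        GROUP BY c.name
        ORDER BY c.name
        """
    ).fetchall()
    conn.close()
    return rows
```

Neither table is copied into the other database. The join is resolved at query time, which is the property a federated engine provides across remote sources.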

Benefits of Zero-ETL Database APIs with DreamFactory

Faster Performance and Real-Time Insights

DreamFactory eliminates the delays common with traditional ETL processes. While older ETL methods might take anywhere from 15 to 60 minutes per batch, DreamFactory's direct API queries to live databases deliver results in less than a second. With Change Data Capture (CDC), any updates to the database are immediately captured, ensuring your AI models and dashboards always work with the most current data. This means businesses can act on customer behavior insights instantly, enabling real-time personalization that keeps pace with user needs.

Lower Costs and Simpler Architecture

Real-time insights are just one part of the story - DreamFactory also helps reduce costs and simplify operations. By removing the need for data duplication and expensive transformations, organizations can cut infrastructure costs by 50%-70%. Gone are the days of maintaining intermediate storage, dedicated ETL servers, and multiple data warehouses. Instead, DreamFactory's serverless scaling ensures you only pay for what you use.

From an architecture standpoint, things become much simpler. Rather than juggling 5 to 10 components - like extractors, transformers, loaders, and staging areas - you can streamline everything with a single API access layer. DreamFactory automatically generates APIs for your existing databases and connects SQL and NoSQL sources using CDC and streaming, all without requiring custom code. Engineering teams also benefit, reducing pipeline maintenance by 70%, which frees up time to focus on building new features.

Better Security and Governance

Performance and cost savings are important, but security and governance are equally critical. DreamFactory secures data access by integrating with your existing authentication systems, such as OAuth, LDAP, and SSO. This identity passthrough ensures audit logs reflect actual user identities. Role-based access control (RBAC) protects live queries, preventing unauthorized access to raw databases, while real-time audit logging tracks every API call to meet compliance needs.

This centralized approach significantly reduces vulnerabilities compared to custom-built APIs, as governance policies are enforced directly at the API layer. For industries like finance and healthcare, which require strict compliance, DreamFactory provides the detailed audit trails regulators demand - without the complexity and opacity of traditional batch ETL processes.

How DreamFactory Enables Zero-ETL Database APIs

DreamFactory simplifies the process of creating database APIs by offering a self-hosted, secure abstraction layer. This allows applications to query live databases directly through REST APIs, eliminating the need to move data. By connecting to existing databases with standard credentials, the platform instantly generates REST APIs, making the process seamless and efficient.

Key Features for Zero-ETL

DreamFactory’s Zero-ETL capabilities revolve around its ability to automatically generate secure REST APIs for over 30 databases, including MySQL, PostgreSQL, MongoDB, SQL Server, and Oracle. Through schema introspection, it identifies tables, collections, functions, and metadata, ensuring the API always reflects the live state of your database.

The platform also supports virtual fields, allowing real-time data transformation. For instance, you can use functions like concat() or date() to format data dynamically without altering the database schema. Want to combine customer first and last names? A virtual field can handle that at query time, with no ETL job required. Additionally, you can inject custom business logic using server-side scripting in JavaScript (V8 or Node.js), PHP, or Python.
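
The concept behind a virtual field can be sketched in a few lines of Python. This illustrates compute-on-read in application code; it is not DreamFactory's internals, which evaluate database functions on the server. The field names and the `with_virtual_fields` helper are hypothetical.

```python
from datetime import date

# Hypothetical virtual-field definitions: field name -> function of the stored row.
VIRTUAL_FIELDS = {
    "full_name": lambda row: f"{row['first_name']} {row['last_name']}",
    "signup_year": lambda row: date.fromisoformat(row["signup_date"]).year,
}


def with_virtual_fields(row, virtual_fields=VIRTUAL_FIELDS):
    """Return a copy of the row with computed fields added at read time."""
    enriched = dict(row)  # copy, so the stored record is never modified
    for name, compute in virtual_fields.items():
        enriched[name] = compute(row)
    return enriched
```

The stored record keeps its original columns, and the derived values exist only in the response, so no schema change or batch job is involved.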

DreamFactory also ensures database transactions are handled atomically. For example, when performing multi-table inserts, appending rollback=true to the URI prevents orphaned records by rolling back the entire transaction if an error occurs. Developers benefit from auto-generated OpenAPI (Swagger) documentation, which allows them to test endpoints and view live data structures immediately after connecting a database.

These features integrate seamlessly with existing security and identity frameworks, ensuring a secure and flexible API solution.

Integration with Existing Infrastructure

DreamFactory is designed to work with your current identity and security systems. It integrates with identity providers and authentication standards such as LDAP, Active Directory, Okta, OAuth 2.0, OpenID Connect, JWT, and SAML/SSO. Identity passthrough ensures row-level security is maintained, and audit logs display actions by real users instead of generic service accounts.

The platform’s Role-Based Access Control (RBAC) enforces policies at the service, endpoint, and field levels, directly within the API layer as part of a comprehensive API management strategy. DreamFactory also modernizes legacy systems by mounting existing SOAP services and exposing them as RESTful APIs, allowing for upgrades without rewriting code. For monitoring and reporting, it integrates with the ELK stack (Elasticsearch, Logstash, Kibana), providing real-time insights into API activity.
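
A minimal sketch of what service-, endpoint-, and field-level checks can look like follows. The `POLICY` structure, the role name, and both helper functions are assumptions made for illustration; DreamFactory's actual role configuration is managed through its admin console and may be shaped differently.

```python
# Hypothetical policy: role -> service -> table -> allowed verbs and fields.
POLICY = {
    "analyst": {
        "postgres": {
            "customers": {"verbs": {"GET"}, "fields": {"id", "name", "region"}},
        }
    }
}


def authorize(role, service, table, verb):
    """Service- and endpoint-level check: is this verb allowed at all?"""
    rule = POLICY.get(role, {}).get(service, {}).get(table)
    return rule is not None and verb in rule["verbs"]


def filter_fields(role, service, table, record):
    """Field-level check: drop any column the role may not see."""
    allowed = POLICY[role][service][table]["fields"]
    return {key: value for key, value in record.items() if key in allowed}
```

Enforcing both checks in the API layer means a client never receives a column it is not entitled to, regardless of what the underlying table contains.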

Deployment Options for Any Environment

DreamFactory gives you full control by being customer-hosted, ensuring your data stays within your infrastructure. It supports deployment through Docker and Helm for Kubernetes, enabling rapid scaling and portability across hybrid environments. For more traditional setups, you can install it on bare metal or virtual machines running Linux or Windows.

The platform’s lightweight architecture even allows it to run on devices like the Raspberry Pi, making it ideal for edge computing. Its stateless design means you can easily transfer package files between development and production environments - whether on-premises or in the cloud - using the REST API or Admin Console. This flexibility makes DreamFactory a strong choice for air-gapped or highly regulated environments, such as government agencies or financial institutions handling sensitive data.

| Deployment Option | Environment Type | Technical Requirement |
|---|---|---|
| Docker | Cloud, On-premises, Hybrid | Docker Engine / Desktop |
| Kubernetes (Helm) | Cloud, Hybrid | Kubernetes Cluster |
| Linux | On-premises, VM | Linux Server (Ubuntu, CentOS) |
| Windows | On-premises, VM | Windows Server |
| Bare Metal | On-premises, Edge | Physical Hardware / Raspberry Pi |

How to Implement Zero-ETL with DreamFactory

You can get started with Zero-ETL using DreamFactory in under 15 minutes. DreamFactory simplifies the process by automatically generating APIs that connect directly to live databases, eliminating the need for backend coding or pipeline building.

Step 1: Deploy DreamFactory

First, choose a deployment method that suits your infrastructure needs. For quick prototyping, Docker is the fastest option. Simply run:

docker-compose up -d --build

This will launch a working instance of DreamFactory.

If you're setting up on a traditional server, download the installer from the official site and execute the following command on a Linux server equipped with PHP 8+ and MySQL or PostgreSQL for metadata storage:

curl -s https://installer.dreamfactory.com | bash

For cloud setups, deploying via AWS Marketplace is a great choice. It comes pre-configured with Aurora, streamlining the process and cutting setup time to less than 15 minutes.

Make sure your server has at least 4GB of RAM (8GB is better if you're hosting the system database on the same server). Once installed, access the admin console at https://your-server/admin to complete the setup wizard. Here, you’ll configure your admin user and initial service connections. For highly regulated environments, bare-metal or VM installations are recommended.

Step 2: Connect Data Sources

Head to the Services tab in the admin console, click Create, and choose your database type. DreamFactory supports over 30 connectors, including MySQL, PostgreSQL, SQL Server, MongoDB, Cassandra, Snowflake, and cloud platforms like Salesforce. Enter your connection details (e.g., host, port, database name, credentials) and test the connection. If your database isn’t directly accessible, you can set up an SSH tunnel to secure the connection.

Once you save the connection, DreamFactory performs schema introspection. For example, connecting to a PostgreSQL database automatically generates endpoints like:

GET /api/v2/postgres/_table/customers

These endpoints allow real-time queries without needing ETL. You can test the connection with a simple query:

curl -X GET "https://your-instance/api/v2/postgres/_table/sales" -H "X-DreamFactory-Session-Token: your-token"

This fetches live sales data directly from the database. After confirming the connection works, move on to securing it with identity passthrough.
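
The same request can be prepared from application code. The sketch below uses only Python's standard library and builds the request without sending it. The host `your-instance`, the service name `postgres`, and the token value are the placeholders from the curl example, and `build_table_request` is a hypothetical helper.

```python
import urllib.request


def build_table_request(base_url, service, table, session_token):
    """Prepare a GET request for a table endpoint (nothing is sent here)."""
    url = f"{base_url.rstrip('/')}/api/v2/{service}/_table/{table}"
    return urllib.request.Request(
        url,
        headers={
            # Same header the curl example uses for authentication.
            "X-DreamFactory-Session-Token": session_token,
            "Accept": "application/json",
        },
        method="GET",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it to a live instance.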

Step 3: Configure Security and Identity Passthrough

To ensure secure data access, configure identity passthrough so user credentials are passed directly to the backend. In the Roles section, go to Lookup Keys and enable "Passthrough" for your service. Then, map OAuth scopes to database users by integrating with providers like Okta or Auth0. Set passthru_mode: 'header' in the service configuration to ensure each query runs under the user's context. This approach preserves row-level security and audit trails without centralizing sensitive data.

You can also set up rate limiting to prevent abuse. For example, you might allow 1,000 requests per minute per IP or user. Enable additional security features like CORS, IP whitelisting, and API key enforcement. A stricter role-level policy, capped at 500 requests per minute, might look like this:

{"rate_limit": {"requests_per_minute": 500}}

By default, session lifetimes are capped at 60 minutes for security purposes, though this can be adjusted in the .env file.
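
For intuition about what a per-minute limit does, here is a small fixed-window limiter in Python. It is a conceptual sketch only, not DreamFactory's limiter; the class name and the injectable `clock` parameter are assumptions chosen to keep the example testable.

```python
import time


class FixedWindowLimiter:
    """Allow at most `requests_per_minute` calls per key in each 60-second window."""

    def __init__(self, requests_per_minute, clock=time.monotonic):
        self.limit = requests_per_minute
        self.clock = clock   # injectable so tests can control time
        self.windows = {}    # key -> (window_start, request_count)

    def allow(self, key):
        now = self.clock()
        start, count = self.windows.get(key, (now, 0))
        if now - start >= 60:        # window expired: start a fresh one
            start, count = now, 0
        if count >= self.limit:      # over the limit: reject the request
            self.windows[key] = (start, count)
            return False
        self.windows[key] = (start, count + 1)
        return True
```

The key can be an IP address or a user ID, which mirrors the per-IP and per-user limits described above.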

Step 4: Generate and Customize APIs

Once your data source is connected, DreamFactory automatically generates CRUD endpoints for all tables and views. To customize these APIs, go to the Scripts tab and add server-side logic using V8, Node.js, PHP, or Python. For example, you could transform responses with a script like this:

event.response.content = filterLiveData(event.response.content)

Or you could handle aggregations directly:

platform().createScript('aggregate_sales', 'php', 'return array_sum($data["amount"]);');

DreamFactory also generates OpenAPI (Swagger) documentation at /api/v2/_swagger, making it easy for developers to test endpoints without writing code. You can enhance functionality with virtual fields, applying database functions like concat() or date() to format data dynamically. For multi-table inserts, append rollback=true to the URI to ensure atomic operations, preventing partial writes if an error occurs. Once customized, these APIs are ready for real-time integration.
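
As one hedged example of the `rollback=true` option, the sketch below prepares a batch insert whose URL carries the parameter. It assumes records are wrapped in a top-level `resource` array and reuses the placeholder host `your-instance`; `build_insert_request` and the `orders` table are hypothetical.

```python
import json
from urllib.parse import urlencode


def build_insert_request(base_url, service, table, records, rollback=True):
    """Return the URL and JSON body for a batch insert (nothing is sent here)."""
    query = urlencode({"rollback": "true" if rollback else "false"})
    url = f"{base_url.rstrip('/')}/api/v2/{service}/_table/{table}?{query}"
    # Assumed payload shape: records wrapped in a "resource" array.
    body = json.dumps({"resource": records})
    return url, body
```

With the flag set, a failure on any record is meant to undo the whole batch rather than leave a partial write behind.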

Step 5: Enable Real-Time Access for Applications

DreamFactory's APIs can be integrated into dashboards, AI workflows, and applications using REST or GraphQL endpoints. WebSocket support allows for live updates. For example, in tools like Tableau or Microsoft Power BI, you can embed API endpoints with refresh intervals as short as 5 seconds for near-instant updates.

For AI and machine learning, send live data to your models via POST endpoints. Applications requiring continuous updates can use event webhooks for change data capture (CDC)-like streaming. Built-in REST parameters like filter, limit, order, and related help you retrieve only the data you need, reducing payload size and improving performance. This approach ensures real-time insights without the delays of traditional batch ETL processes.
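
The query parameters named above can be combined in a single URL. The following sketch shows one way to build it with Python's standard library. The filter expression syntax, the `sales` table, and the `build_query_url` helper are assumptions for illustration, so check your instance's generated API documentation for the exact forms it accepts.

```python
from urllib.parse import urlencode


def build_query_url(base_url, service, table, filter_expr=None,
                    limit=None, order=None, related=None):
    """Build a table URL that asks the server for only the rows needed."""
    candidates = {
        "filter": filter_expr,
        "limit": limit,
        "order": order,
        "related": related,
    }
    # Drop unset parameters so the URL stays minimal.
    params = {name: value for name, value in candidates.items() if value is not None}
    url = f"{base_url.rstrip('/')}/api/v2/{service}/_table/{table}"
    return f"{url}?{urlencode(params)}" if params else url
```

Filtering and limiting on the server keeps payloads small, which matters most for dashboards that refresh every few seconds.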

Enterprise Use Cases for Zero-ETL Database APIs

DreamFactory's combination of performance, cost efficiency, and security is driving its adoption in enterprise settings. Companies like Intel and Nike leverage Zero-ETL APIs to gain live data access without the complexity of traditional ETL processes.

Real-Time Dashboards and Analytics

By skipping the delays of batch ETL processes, Zero-ETL APIs enable dashboards to reflect changes in business operations almost instantly. For example, Deloitte used DreamFactory to integrate ERP data from Deltek Costpoint, giving executives real-time access to critical dashboard insights. Similarly, the National Institutes of Health (NIH) connected their SQL databases via DreamFactory APIs, enhancing their Grant Application system without a costly overhaul. This ensured their dashboards remained continuously updated. Tools like Tableau and Power BI can seamlessly consume these APIs, offering near-instant operational visibility. These live updates also provide a solid foundation for advanced AI workflows.

AI and Machine Learning Workflows

Access to live data significantly improves the accuracy and responsiveness of AI models. DreamFactory acts as an on-premises data platform, allowing AI systems to securely access governed data through REST or Model Context Protocol (MCP). Built-in security features, like role-based access control, ensure that AI systems only access the data they are authorized to use. For instance, ExxonMobil utilized DreamFactory to create internal Snowflake REST APIs, solving integration challenges and enabling AI workflows to function on real-time operational data. This adaptability allows businesses to respond quickly to changing conditions.

Legacy System Modernization

DreamFactory simplifies the modernization of legacy systems by transforming outdated SOAP services into REST APIs, eliminating the need for disruptive overhauls. For example, the Vermont Department of Transportation connected systems from the 1970s with modern databases using DreamFactory APIs. Similarly, D.A. Davidson revitalized its investor portal, enabling real-time financial updates. Instead of undertaking a time-consuming and expensive legacy stack replacement, these organizations layered modern API access on top of existing systems. This approach maintains operational continuity while delivering immediate benefits to users and internal teams. Whether for real-time analytics, AI workflows, or system modernization, DreamFactory ensures smooth operations while unlocking new value.

"DreamFactory streamlines everything and makes it easy to concentrate on building your front end application. I had found something that just click, click, click... connect, and you are good to go." - Edo Williams, Lead Software Engineer, Intel

Challenges and Best Practices for Zero-ETL Adoption

Zero-ETL adoption, while promising, requires meticulous planning to tackle challenges related to performance, security, and infrastructure. Below are some effective practices to address these hurdles and ensure a smooth deployment.

Managing Data Volume and Latency

Handling large data volumes and minimizing latency are critical. Techniques like request caching and distributed querying can significantly improve performance. For instance, request caching at the API level stores frequently accessed data, reducing repeated database queries and lowering compute costs. DreamFactory's data mesh architecture takes this further by enabling queries across multiple databases - whether SQL, NoSQL, or others - through a single API call. This eliminates the need for centralizing data in a warehouse while maintaining real-time access to it. Additionally, tools like rate limiting and audit logging can monitor and manage request volumes, ensuring system stability under heavy loads.
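
Request caching can be pictured as a small time-to-live (TTL) cache sitting in front of the database call. The sketch below is a conceptual illustration, not DreamFactory's caching layer; `TTLCache`, its `clock` parameter, and the cache key are hypothetical.

```python
import time


class TTLCache:
    """Keep fetched values for ttl_seconds so repeated requests skip the database."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so tests can control time
        self.store = {}      # key -> (stored_at, value)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self.store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]              # fresh hit: no database call
        value = fetch()                  # miss or stale entry: query the source
        self.store[key] = (now, value)
        return value
```

The TTL is the trade-off knob: a longer value saves more database work, while a shorter one keeps responses closer to the live state.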

Maintaining Security in Direct Data Access

Direct access to databases introduces potential security risks, but these can be mitigated with robust measures. DreamFactory employs parameterized queries, which guard against SQL injection by binding user input as parameters rather than embedding raw input in the SQL text. Master credentials are encrypted and kept server-side, so they remain inaccessible to clients. Moreover, Role-Based Access Control (RBAC) allows for granular permissions, restricting access to specific tables, columns, or records based on user roles. Kevin McGahey, Solutions Engineer at DreamFactory, emphasizes this approach:

"Security starts with architecture. Treat your AI like an untrusted actor - and give it safe, supervised access through a controlled API, not a login prompt."

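The parameterized-query defense described above can be demonstrated with Python's built-in `sqlite3` module. This is a generic sketch of parameter binding, not DreamFactory's code; the `customers` table and the `find_customers` helper are hypothetical.

```python
import sqlite3


def find_customers(conn, name):
    """Look up customers by name using a bound parameter, never string concatenation."""
    return conn.execute(
        "SELECT id, name FROM customers WHERE name = ?", (name,)
    ).fetchall()


def demo():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO customers (name) VALUES (?)", [("Ada",), ("Grace",)]
    )
    safe = find_customers(conn, "Ada")
    # A classic injection attempt is treated as a literal string, not as SQL.
    injected = find_customers(conn, "Ada' OR '1'='1")
    conn.close()
    return safe, injected
```

Because the input is bound as a value, the injection attempt is compared literally against the `name` column and matches nothing.
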
Working with Hybrid and Multi-Cloud Environments

Many enterprises operate in complex environments that combine on-premises data centers with private and public clouds. DreamFactory provides flexibility in such setups by supporting deployment through Docker, Helm/Kubernetes, and native installers for Linux or Windows. This allows organizations to securely connect legacy on-premises databases with modern cloud applications without requiring full migrations. Features like Cross-Origin Resource Sharing (CORS) prevent unauthorized domain access to APIs, while SSL/TLS encryption secures all network communications. Additionally, the platform's service abstraction layer simplifies transitions between development, testing, and production environments without requiring changes to client-side code.

Conclusion: The Future of Data Access with Zero-ETL

Zero-ETL is reshaping how enterprises access and use their data, and the change goes beyond a technical upgrade. By eliminating traditional ETL pipelines, Zero-ETL systems can deliver insights in less than 10 seconds. DreamFactory plays a key role in this shift, simplifying backend complexities and removing the need for extensive data movement. As Swami Sivasubramanian, Vice President of AWS Data and Machine Learning, put it:

"AWS is investing in a zero-ETL future so that builders can focus more on creating value from data, instead of preparing data for analysis."

This shift highlights a larger trend in the industry: making data integration seamless. The integration of HTAP (Hybrid Transactional/Analytical Processing) systems - where transactional and analytical processes coexist - and decentralized data mesh architectures that allow querying across diverse data sources are paving the way for the future of enterprise data infrastructure. With over 20,000 publicly accessible APIs supporting these evolving strategies, the API management market is expected to surpass $13 billion by 2027.

DreamFactory’s unified interface offers a real solution for enterprises navigating hybrid and multi-cloud environments. Whether modernizing systems from the 1970s or enabling AI agents to securely access real-time data, these advancements are setting the stage for more agile, responsive businesses.

With its focus on performance, cost savings, and enhanced security, Zero-ETL is not just an emerging trend - it’s becoming a necessity. The real question isn’t whether Zero-ETL will dominate, but how quickly your organization can adopt it to stay ahead in a world where real-time insights are the key to success.

FAQs

How does Zero-ETL enhance data security compared to traditional ETL processes?

Zero-ETL improves data security by removing the need to copy or duplicate data between systems. Because data is not staged or replicated in bulk, the risk of breaches in transit or leaks from duplicated datasets drops significantly.

This approach also reduces vulnerabilities by keeping data in its original environment, cutting down on risks tied to external pipelines or third-party processing. By doing so, businesses can maintain stricter control over sensitive information while simplifying how data is accessed.

What are the main advantages of using DreamFactory for implementing Zero-ETL solutions?

DreamFactory makes implementing Zero-ETL a breeze by automatically creating secure, fully documented REST APIs for your data sources. This approach removes the need for manual backend coding and the hassle of traditional ETL pipelines, offering real-time access to live data with lower latency and less operational complexity.

With features like role-based access control, OAuth authentication, and detailed logging, DreamFactory prioritizes security and governance for your APIs. This means you can share live data seamlessly across platforms - whether it's mobile apps, web portals, or AI systems - without the risks or delays tied to data replication. By upgrading your data infrastructure, DreamFactory enables quicker, more efficient decision-making with secure, instant access to the information that matters most.

What is federated querying in a Zero-ETL environment, and how does it work?

Federated querying in a Zero-ETL setup offers a way to access and analyze data stored in multiple external databases without physically moving or replicating it. Instead of consolidating data into a central repository, this method connects directly to external sources and runs SQL queries on them. The results are then returned in a common format that the calling application can consume.

This approach removes the delays and complexities associated with traditional ETL pipelines. By accessing data directly at its source, organizations can work with the most current information, enabling quicker, more informed decisions. It also minimizes maintenance efforts and helps maintain data accuracy.

Kevin Hood is an accomplished solutions engineer specializing in data analytics and AI, enterprise data governance, data integration, and API-led initiatives.
