Policy-driven APIs are reshaping how AI interacts with enterprise data by embedding security and governance directly into the API layer. These APIs address critical challenges like data breaches, compliance, and scalability by enforcing strict rules for authentication, authorization, and data filtering. Here’s what you need to know:

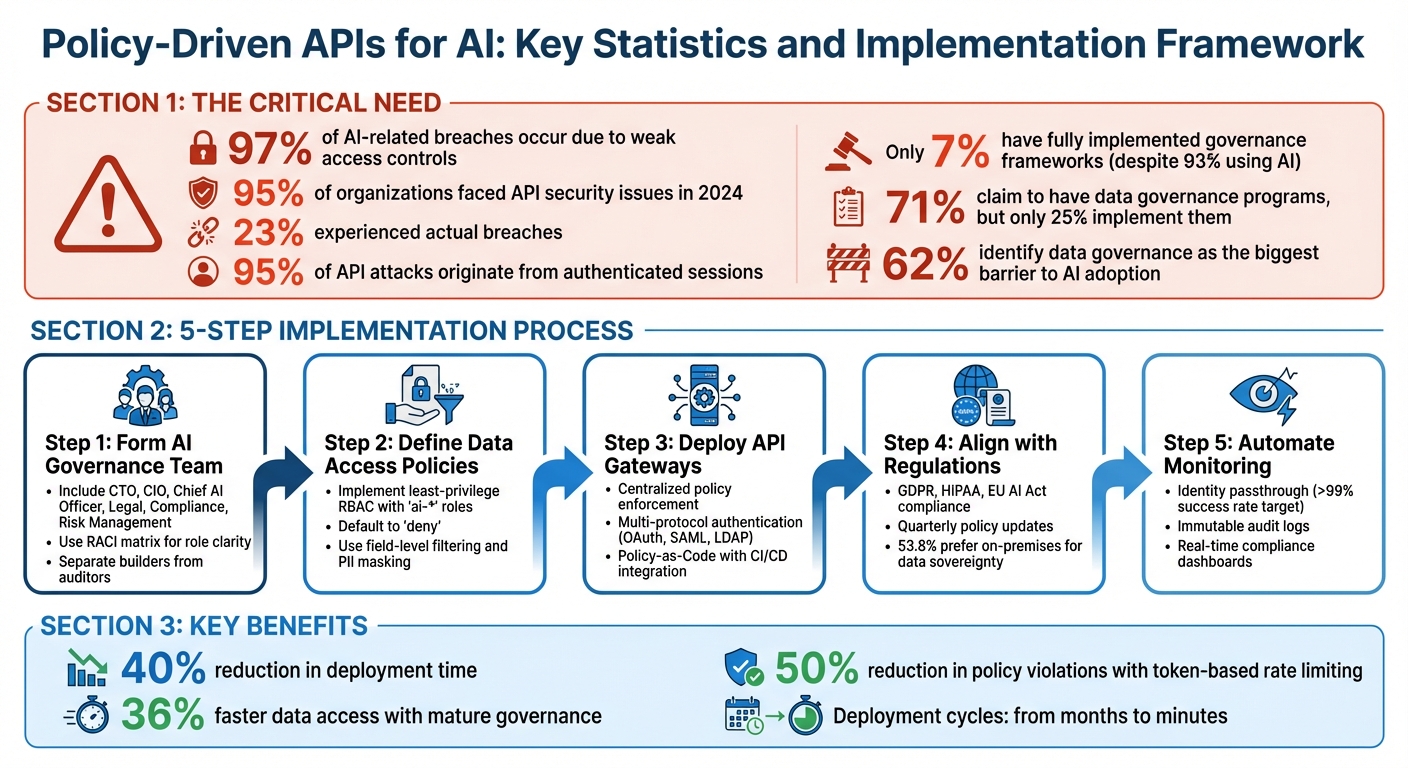

- Why They Matter: 97% of organizations that experienced AI-related breaches lacked proper access controls. Policy-driven APIs address this by treating every AI request as untrusted and enforcing access rules on each one.

- Key Features:

  - Zero-Trust Security: Every query is authenticated and authorized.

  - Identity Passthrough: Tracks user-specific actions for audit trails.

  - Data Filtering: Masks sensitive fields and enforces least-privilege access.

  - Automated Enforcement: Policies evolve with AI workloads, reducing manual overhead.

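- Implementation Steps: Build a governance framework, implement secure API access controls, automate policy enforcement, and ensure transparency and auditability.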

Figure: Policy-Driven APIs for AI: Key Statistics and Implementation Framework

Building Governance Frameworks

Before rolling out policy-driven APIs, it's crucial to have a governance framework in place. This framework should clearly outline who makes decisions, how approvals work, and how exceptions are handled. Interestingly, while 71% of organizations claim to have data governance programs, only 25% actually put them into practice. AI governance fares little better: just 28% have enterprise-wide oversight of AI governance roles and responsibilities.

Form a Governance Team

Start by forming a formal AI governance council that includes key leaders like your CTO, CIO, Chief AI Officer (if applicable), and representatives from legal, compliance, risk management, and business units. This team should operate continuously, using the Three Lines of Defense model. The council's role is to provide strategic direction and approve use cases, while the operational layer manages day-to-day tasks through technical experts and managers.

Assign clear roles, such as Data Stewards, Model Owners, and Governance Champions, ensuring responsibilities don’t overlap. A RACI matrix (Responsible, Accountable, Consulted, and Informed) can help clarify roles as your AI initiatives grow. It’s critical to separate duties: to avoid conflicts of interest, the team that builds an AI system should not be the team that audits it. Regular reviews of API control mechanisms are essential to keep up with changing technical and regulatory requirements. Additionally, document a charter that outlines both the intended uses and prohibited applications of the AI system to avoid scope creep.

Once your team is set, the next step is to establish clear data access policies that align with your governance framework.

Define AI Data Access Policies

Your policies should enforce explicit authentication and context-aware authorization for every AI interaction. Implement a least-privilege Role-Based Access Control (RBAC) system with "ai-*" roles that default to "deny", granting access only to specific endpoints, fields, or rows required for the task. Without strong access controls, organizations are at a much higher risk of breaches.
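The default-deny idea can be sketched in a few lines of Python. This is a minimal illustration, not any gateway's actual API: the role names, endpoint paths, and the `is_allowed` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Grant:
    """One explicit permission: a method on an endpoint, limited to named fields."""
    method: str
    endpoint: str
    allowed_fields: frozenset = field(default_factory=frozenset)


# Hypothetical role table. Anything not listed here is denied.
ROLE_GRANTS = {
    "ai-support-reader": [
        Grant("GET", "/api/v2/tickets", frozenset({"id", "status", "subject"})),
    ],
    "ai-sales-reader": [
        Grant("GET", "/api/v2/accounts", frozenset({"id", "name", "region"})),
    ],
}


def is_allowed(role: str, method: str, endpoint: str, requested_fields: set) -> bool:
    """Return True only if an explicit grant covers the whole request."""
    if not role.startswith("ai-"):
        return False  # this table only governs ai-* roles
    for grant in ROLE_GRANTS.get(role, []):
        if (
            grant.method == method
            and grant.endpoint == endpoint
            and requested_fields <= grant.allowed_fields
        ):
            return True
    return False  # default deny: no matching grant, no access
```

Because the function falls through to `False`, forgetting to configure a role fails closed rather than open.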

"Replace 'AI writes SQL' with 'AI calls vetted endpoints.' Parameterization and role policies neutralize injection risk while preserving speed." – Kevin McGahey, Product Lead

To minimize data exposure, use field-level filtering, PII masking, and pseudonymization, adhering to standards like GDPR and HIPAA. AI systems should never write raw SQL; instead, they should interact with pre-approved, parameterized REST endpoints to eliminate injection risks. Categorize AI applications by risk level - Low, Medium, or High - and adjust governance measures accordingly. For high-stakes scenarios, such as in healthcare or finance, introduce human-in-the-loop checkpoints where AI outputs are verified before any action is taken.

Once access policies are in place, ensure they align with emerging regulatory standards to maintain compliance.

Align with Regulatory Requirements

Governance frameworks must stay flexible to meet evolving compliance standards like GDPR, HIPAA, and the EU AI Act. Treat your AI policies as living documents, updating them quarterly to reflect new regulations. Use a centralized-federated approach: a central team sets overarching standards, while domain-specific teams implement them locally.

Enable identity passthrough in your API layer to create complete audit trails, showing exactly which user initiated each AI action. Maintain immutable logs of every AI interaction, capturing details like identity, parameters, and decision logic, which simplifies compliance reporting. Require standardized documentation, such as "System Summaries" and "Evaluation Records", for every AI project to ensure auditability for regulators. Notably, on-premises deployment models dominate the AI governance market (53.8% share) because industries like healthcare, finance, and government often require self-hosted solutions to maintain data sovereignty.

Implementing Secure API Access Controls

To maintain robust security, ensure that AI data access is governed by strict authentication, authorization, and validation processes for every request. A zero-trust approach prevents AI agents from exceeding their permissions or bypassing safeguards. Here's a closer look at how to secure authentication and manage data access effectively.

Authentication and Authorization

A solid governance framework begins with precise user authentication and role-based controls. For a deeper dive into enterprise-grade protection, consult a comprehensive API security guide. One key practice is identity passthrough, which propagates the authenticated user's identity through the API layer. This ensures that database audit logs reflect the actions of individual users rather than a generic service account. By doing so, AI agents operate strictly within the entitlements of each user, creating accurate records of who initiated specific actions.

Standard identity protocols like OAuth 2.0, SAML, OIDC, LDAP, and Active Directory are essential for secure operations. These systems enable the use of short-lived, scoped tokens. For example, JSON Web Tokens (JWTs) can carry user identity and roles via headers like X-DreamFactory-Session-Token. This allows APIs to enforce permissions at different levels:

- Service/endpoint level: Grant or deny access to entire APIs.

- Field-level security: Restrict access to sensitive data, such as salary columns for non-HR roles.

- Row-level security: Filter query results so that users, like sales reps, see only their own accounts.
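To make the field-level and row-level cases concrete, here is a small Python sketch. The sample data, the `owns_account` predicate, and the function name are assumptions made for illustration; a real gateway would typically push these filters down into the query itself.

```python
from typing import Callable, Iterable


def filter_rows_and_fields(
    rows: Iterable[dict],
    user: dict,
    visible_fields: set,
    row_predicate: Callable[[dict, dict], bool],
) -> list:
    """Apply row-level security first, then strip fields the role may not see."""
    result = []
    for row in rows:
        if not row_predicate(row, user):
            continue  # row-level: this user may not see this record at all
        # field-level: keep only the columns granted to the role
        result.append({k: v for k, v in row.items() if k in visible_fields})
    return result


# Hypothetical sample data
ACCOUNTS = [
    {"id": 1, "name": "Acme", "owner": "alice", "credit_limit": 50000},
    {"id": 2, "name": "Globex", "owner": "bob", "credit_limit": 75000},
]


def owns_account(row: dict, user: dict) -> bool:
    """Sales reps see only the accounts they own."""
    return row["owner"] == user["username"]


alice = {"username": "alice", "role": "sales-rep"}
visible = filter_rows_and_fields(ACCOUNTS, alice, {"id", "name"}, owns_account)
print(visible)  # [{'id': 1, 'name': 'Acme'}]
```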

"Treat AI like any external, untrusted client. Every action must be authenticated, authorized, validated, monitored, and logged." – Kevin McGahey, Solutions Engineer and Product Lead, DreamFactory

Role-Based Access Control (RBAC) is another critical layer of security. It converts user identities into enforceable permissions. For instance, create specific ai-* roles with a default deny setting, granting access only to necessary endpoints. In industries like finance or healthcare, fine-grained scopes offer additional security, enforcing the principle of least privilege. However, these require more detailed planning compared to broader, coarse-grained scopes.

| Mechanism | Description | AI System Benefit |
|---|---|---|
| OAuth 2.0 | Delegated authorization standard | Enables AI agents to use short-lived, scoped tokens |
| SAML / SSO | Single Sign-On integration | Maps IdP attributes (e.g., groups) to API roles |
| Identity Passthrough | User identity propagation | Ensures accurate database audit logs |
| mTLS | Mutual Transport Layer Security | Secures service-to-service communication |

Data Validation and Encryption

Securing data integrity and privacy is just as important as managing user identities. Parameterized endpoints are a critical safeguard against SQL injection attacks. Instead of allowing AI agents to execute raw SQL queries, require them to interact with pre-approved REST endpoints that validate all parameters. This ensures that AI-generated inputs conform to expected schemas, types, and ranges, blocking malformed or harmful data.
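As a rough sketch of what a vetted, parameterized endpoint does behind the scenes, the Python example below validates inputs against an allow-list and a range, then binds them as query parameters instead of concatenating strings. It uses the standard-library `sqlite3` module with an in-memory database; the `tickets` table, the allowed statuses, and the function names are illustrative assumptions.

```python
import sqlite3

ALLOWED_STATUSES = {"open", "pending", "closed"}  # hypothetical allow-list
MAX_LIMIT = 100


def validate_params(status, limit):
    """Reject anything outside the expected type, value set, or range."""
    if not isinstance(status, str) or status not in ALLOWED_STATUSES:
        raise ValueError("status must be one of: " + ", ".join(sorted(ALLOWED_STATUSES)))
    if isinstance(limit, bool) or not isinstance(limit, int) or not 1 <= limit <= MAX_LIMIT:
        raise ValueError(f"limit must be an integer between 1 and {MAX_LIMIT}")
    return status, limit


def list_tickets(conn, status, limit=20):
    """The only query the AI agent can trigger; values are bound, never interpolated."""
    status, limit = validate_params(status, limit)
    cursor = conn.execute(
        "SELECT id, subject FROM tickets WHERE status = ? ORDER BY id LIMIT ?",
        (status, limit),
    )
    return cursor.fetchall()


def demo_connection():
    """Build a throwaway in-memory database for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE tickets (id INTEGER PRIMARY KEY, subject TEXT, status TEXT)")
    conn.executemany(
        "INSERT INTO tickets (subject, status) VALUES (?, ?)",
        [("Login fails", "open"), ("Invoice typo", "closed")],
    )
    return conn
```

An input such as `"open' OR '1'='1"` never reaches the database here: it fails the allow-list check, and even if it passed, the `?` placeholder would treat it as a literal value.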

Encryption is another cornerstone of secure API access. Protect data at rest and in transit by encrypting sensitive information, including indices, caches, AI transcripts, and outputs. Store API keys, connection strings, and signing keys in secure vaults or Hardware Security Modules (HSMs) - never embed them in code or expose them in AI prompts. Use mutual TLS (mTLS) to secure communication between services.

Additional measures like field-level filtering, PII masking, and pseudonymization further safeguard sensitive data. These controls ensure that AI agents only access the minimal data necessary for their tasks, reducing risks if an agent is compromised or misconfigured.
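Masking and pseudonymization can look something like the Python sketch below. It assumes a keyed hash (HMAC-SHA256) for pseudonyms, which is one common choice rather than a requirement of any standard; the field names and the demo key are hypothetical, and in practice the key would come from a vault as described above.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a stable keyed hash so records can still be joined."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep:
        return "***"
    return local[:1] + "***@" + domain


def redact_record(record: dict, key: bytes) -> dict:
    """Return a copy that is safe to hand to an AI agent."""
    out = dict(record)
    if "email" in out:
        out["email"] = mask_email(out["email"])
    if "customer_id" in out:
        out["customer_id"] = pseudonymize(str(out["customer_id"]), key)
    out.pop("ssn", None)  # drop fields the agent never needs
    return out


DEMO_KEY = b"demo-only-key"  # assumption for the example; load real keys from a vault
record = {"customer_id": 42, "email": "jane.doe@example.com", "ssn": "000-00-0000", "plan": "pro"}
safe = redact_record(record, DEMO_KEY)
```

The same customer always maps to the same pseudonym under a given key, so the agent can still group or correlate records without seeing the real identifier.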

Rate Limiting and Traffic Management

AI APIs must carefully monitor both Requests per Minute (RPM) and Tokens per Minute (TPM), as token usage can vary significantly based on context size. Misconfigured clients can lead to staggering expenses - one example saw costs of up to $15,000 in just 48 hours. To mitigate risks, enforce multi-level rate limits (e.g., by instance, user, role, service, and endpoint) and implement sub-second monitoring. Use exponential backoff with jitter for handling 429 Too Many Requests errors, and set alert thresholds at 80%, 90%, and 100% capacity to prevent cost overruns.
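On the client side, exponential backoff with jitter might be sketched as follows. This version uses "full jitter" (a random delay between zero and the exponential ceiling); the `RateLimited` exception and the `request_fn` callable are placeholders for whatever HTTP client you use, and the base and cap values are arbitrary.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the caller's request function when the API answers 429."""

    def __init__(self, retry_after=None):
        super().__init__("429 Too Many Requests")
        self.retry_after = retry_after  # seconds, if the server sent Retry-After


def backoff_delay(attempt, base=0.5, cap=30.0, rng=random.random):
    """Full jitter: a random delay in [0, min(cap, base * 2**attempt))."""
    return rng() * min(cap, base * (2 ** attempt))


def call_with_backoff(request_fn, max_attempts=5, sleep=time.sleep, rng=random.random):
    """Retry request_fn on RateLimited, waiting longer after each failure."""
    for attempt in range(max_attempts):
        try:
            return request_fn()
        except RateLimited as exc:
            if attempt == max_attempts - 1:
                raise  # out of attempts; surface the error to the caller
            delay = backoff_delay(attempt, rng=rng)
            if exc.retry_after is not None:
                delay = max(delay, exc.retry_after)  # never retry sooner than asked
            sleep(delay)
```

The jitter matters when many AI clients hit the limit at once: without it, they would all retry at the same instant and trigger the limit again.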

For high-traffic environments, replace file-based caching with Redis, which can manage rate limit counters across distributed systems.

To help AI clients adjust their request rates programmatically, include rate limit headers in API responses, such as X-RateLimit-Limit, X-RateLimit-Remaining, and Retry-After. Additionally, integrate abuse detection mechanisms to block harmful requests, such as those containing jailbreak or prompt injection patterns like "ignore previous instructions", which can drain resources unnecessarily.
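On the server side, a simple fixed-window counter that also emits these headers could look like the sketch below. The in-memory dictionary is a stand-in for a shared store such as Redis, and the class and key layout are assumptions for illustration; old windows are never purged here, which a production limiter would need to handle.

```python
import math
import time


class FixedWindowLimiter:
    """Fixed-window request counter that also produces rate limit headers."""

    def __init__(self, limit, window_seconds=60, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.counters = {}  # in-memory stand-in for a shared store such as Redis

    def check(self, key):
        """Count one request for key; return (allowed, response_headers)."""
        now = self.clock()
        window_index = int(now // self.window)
        bucket = (key, window_index)
        count = self.counters.get(bucket, 0)
        headers = {"X-RateLimit-Limit": str(self.limit)}
        if count >= self.limit:
            window_end = (window_index + 1) * self.window
            headers["X-RateLimit-Remaining"] = "0"
            headers["Retry-After"] = str(max(1, math.ceil(window_end - now)))
            return False, headers  # caller should respond with 429
        self.counters[bucket] = count + 1
        headers["X-RateLimit-Remaining"] = str(self.limit - count - 1)
        return True, headers
```

Because `key` can be any hashable value, the same class covers several levels: pass `("user", "alice")`, `("role", "ai-support-reader")`, or `("endpoint", "/api/v2/tickets")` and check each limiter in turn.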

Automating Policy Enforcement

Handling thousands of AI requests per minute manually is simply impractical. Automation ensures security rules are applied consistently across all endpoints and environments, eliminating the need for constant human intervention. By treating policies as code and integrating them into your development process, you can avoid configuration drift and reduce human error.

Deploy API Gateways for Centralized Enforcement

API gateways act as the central control point for enforcing policies, ensuring all teams follow a unified standard. Instead of separately configuring authentication, rate limiting, and data validation for each microservice, the gateway applies these controls uniformly. This not only reduces manual effort but also ensures that every AI request - whether it’s internal or external - undergoes the same level of scrutiny.

Modern gateways support Policy-as-Code, which allows for versioning, testing, and deployment of policies through CI/CD pipelines. With this setup, you can test policy configurations before they go live. For instance, you can verify that new rate limits don’t block legitimate AI traffic or that field-level filtering effectively masks sensitive information like personally identifiable information (PII).

"The fastest secure path is to reduce custom glue code. A hardened API or orchestration layer lets you connect AI to enterprise data/workflows without rebuilding connectors or re-implementing security each time." – Kevin McGahey, Product Lead, DreamFactory

Gateways also simplify multi-protocol authentication (e.g., OAuth, SAML, LDAP) and fine-grained Role-Based Access Control (RBAC) through a single interface. This eliminates the need for separate authentication setups for each backend system. Additionally, you can implement circuit breakers to prevent overloads by automatically triggering when error or failure thresholds are reached during traffic spikes.
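The circuit breaker pattern mentioned above can be sketched in Python as a small state holder. The thresholds and method names are illustrative assumptions; gateways usually expose this as configuration rather than code.

```python
import time


class CircuitBreaker:
    """Opens after repeated failures, then allows a trial request after a cool-down."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed (traffic flows)

    def allow_request(self):
        """Return False while the circuit is open and the cool-down has not elapsed."""
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.reset_timeout:
            return True  # half-open: let a trial request through
        return False

    def record_success(self):
        """A successful call closes the circuit and clears the failure count."""
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        """Count a failure; open (or re-open) the circuit once the threshold is hit."""
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()
```

A caller checks `allow_request()` before forwarding to the backend, then reports the outcome with `record_success()` or `record_failure()`.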

Integrate Policies Into CI/CD Pipelines

While API gateways centralize enforcement, embedding policy checks into your CI/CD pipelines ensures compliance at every step. Every code update undergoes security and compliance validation before deployment. This GitOps approach treats API policies as version-controlled artifacts, creating an audit trail that tracks who made changes and when. Automated testing can catch issues like overly permissive access rules or missing encryption settings before they hit production.

By automating policy enforcement through CI/CD pipelines, you reduce manual configuration errors and avoid delays caused by late-stage security fixes. This also improves key metrics like Lead Time for Change and Change Failure Rate. Your pipeline should verify endpoint configurations and ensure there are no direct database access paths.
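A pipeline check of this kind can be as simple as a lint function run against each policy file. The sketch below assumes a hypothetical policy schema (`default`, `require_tls`, and a list of `rules` with `endpoint`, `fields`, and `backend` keys); real policy formats, such as Open Policy Agent's, differ.

```python
def lint_policy(policy: dict) -> list:
    """Return a list of human-readable problems; an empty list means the policy passes."""
    problems = []
    if policy.get("default") != "deny":
        problems.append("default must be 'deny'")
    if policy.get("require_tls") is not True:
        problems.append("require_tls must be true")
    for index, rule in enumerate(policy.get("rules", [])):
        where = f"rules[{index}]"
        # a missing endpoint is treated the same as a wildcard
        if "*" in rule.get("endpoint", "*"):
            problems.append(f"{where}: wildcard or missing endpoint")
        # a missing field list is treated the same as "all fields"
        if "*" in rule.get("fields", ["*"]):
            problems.append(f"{where}: wildcard or missing field list")
        if rule.get("backend") == "direct-db":
            problems.append(f"{where}: direct database access path")
    return problems


# Hypothetical policy that should pass
GOOD_POLICY = {
    "role": "ai-support-reader",
    "default": "deny",
    "require_tls": True,
    "rules": [
        {"endpoint": "/api/v2/tickets", "fields": ["id", "status"], "backend": "rest"},
    ],
}
```

In CI, the job would fail the build whenever `lint_policy` returns a non-empty list, so an overly permissive rule never reaches production.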

After deployment, continuous runtime monitoring ensures that these policies remain effective and up to date.

Monitor and Govern Runtime API Usage

Even after deployment, runtime monitoring is essential for detecting anomalies, identifying undocumented (Shadow) or deprecated (Zombie) APIs, and enforcing governance. In 2024, 95% of organizations faced security issues with production APIs, with 23% experiencing breaches. This highlights the importance of ongoing monitoring beyond pre-deployment checks.

Use standardized observability tools like OpenTelemetry to collect metrics, logs, and traces. This approach avoids vendor lock-in and ensures consistent troubleshooting across systems. Set proactive alerts for request rates, error rates, and latency to address issues before they escalate into outages or security breaches.

Shadow and Zombie APIs are particularly risky because they bypass governance controls. Edge technologies can automatically detect these APIs, allowing you to either manage or shut them down. For public-facing APIs, a Web Application Firewall (WAF) helps block malicious requests using threat signatures and behavioral analysis.

To protect sensitive data, apply data minimization strategies like automated field-level and row-level filtering. These measures ensure AI models only access the data they need for specific tasks. Runtime monitoring further verifies that these filters are functioning correctly and that no sensitive information leaks through misconfigured policies.

Ensuring Transparency and Auditability

Automation may enforce policies, but transparency is what proves those policies are effective. Without detailed logs and clear reporting, it becomes nearly impossible to demonstrate compliance or trace security incidents. In fact, as of 2025, 84% of organizations had reported experiencing API-related security incidents, yet many lacked the audit trails necessary to understand what went wrong. Having complete visibility - knowing who accessed what data, when, and under which policies - turns your governance framework from a mysterious "black box" into an accountable system. This level of auditability complements automated enforcement and strengthens the governance framework you've established.

Enable Identity Passthrough for Real User Tracking

Generic service accounts like "ai_service" or "api_gateway" obscure the actual users behind AI requests, making compliance audits and forensic investigations extremely challenging. Identity passthrough solves this by preserving each user's identity throughout the API call chain. When audit logs capture specific individuals rather than shared credentials, it becomes much easier to pinpoint who accessed sensitive customer records or financial data during compliance reviews.

This approach is critical because a staggering 95% of API attacks originate from authenticated sessions. A compromised service account with broad permissions could jeopardize your entire database. In contrast, identity passthrough limits the impact of a breach to only what that specific user is authorized to access. Plus, integrating this feature with existing authentication systems like OAuth, LDAP, or SSO is straightforward. The API gateway simply forwards the user's JWT or SAML token to backend systems. This practice aligns with a zero-trust security model, ensuring every action is directly attributable to an individual user.
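The forwarding step can be sketched as a small Python helper that builds the headers for the backend call. It assumes the gateway has already validated the token's signature and expiry, which is out of scope here; the function name and the `X-Request-ID` correlation header are illustrative choices.

```python
def passthrough_headers(incoming: dict, request_id: str) -> dict:
    """Build backend headers that carry the caller's own token, not a service credential."""
    auth = incoming.get("Authorization", "")
    scheme, _, token = auth.partition(" ")
    token = token.strip()
    if scheme.lower() != "bearer" or not token:
        # Fail closed: never fall back to a shared service account
        raise PermissionError("missing user bearer token")
    return {
        "Authorization": f"Bearer {token}",  # the user's token, forwarded as-is
        "X-Request-ID": request_id,  # lets audit logs correlate gateway and backend
    }
```

The important design choice is the failure path: when no user token is present, the request is rejected rather than silently executed under a broad service identity.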

"The identity of the user asking a question should determine what data the AI can access to answer it... Identity passthrough is the foundation for trustworthy enterprise AI." – Nic Davidson, DreamFactory

Maintain Complete Audit Trails

Complete audit trails are essential for verifying every action taken within your system. Logs should capture user identity, ISO 8601 timestamps (e.g., 2026-03-29T01:00:00Z), API endpoints, request parameters, response statuses, and policy action details. For AI-specific use cases, logs should also include token budgets consumed and any content filters applied to meet new regulatory requirements.

Using structured formats like JSON ensures logs are consistent and easy to search or analyze. Tools like Jaeger can provide distributed tracing, helping you track data lineage from its origin through transformations to the final AI model. Storing these logs in an append-only system prevents tampering, offering tamper-proof evidence for compliance reviews.
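A minimal sketch of structured, tamper-evident logging in Python follows. Chaining each entry to the hash of the previous one is a common technique for detecting edits, used here as an assumption; it is not the only way to build an append-only store, and the field names are illustrative.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash the canonical JSON form of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, hash-chained audit log; each entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def append(self, user, endpoint, params, status, policy_action, timestamp=None):
        """Add one JSON-serializable entry with an ISO 8601 UTC timestamp."""
        body = {
            "timestamp": timestamp or datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
            "user": user,
            "endpoint": endpoint,
            "params": params,
            "status": status,
            "policy_action": policy_action,
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS_HASH,
        }
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Each entry serializes cleanly with `json.dumps`, so the same records can feed a search index or a dashboard without a separate format.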

Create Compliance Dashboards

Building on comprehensive audit logs, compliance dashboards offer a clear, real-time view of how well your governance framework is functioning. Dashboards can display key metrics like audit trail coverage, identity passthrough success rates (aiming for >99%), policy enforcement latency, unauthorized access attempts per day, and token consumption per user. Alerts can highlight quota usage and compliance issues, giving immediate insight into rate limits and policy adherence.
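Several of these metrics can be derived directly from audit entries. The Python sketch below assumes each entry has `user` and `status` keys and that a known set of shared service accounts exists; both are assumptions for illustration, and a real dashboard would query a log store instead of a list.

```python
SERVICE_ACCOUNTS = {"ai_service", "api_gateway"}  # shared identities that hide the real user


def compliance_metrics(entries: list) -> dict:
    """Summarize audit entries into a few dashboard-ready numbers."""
    total = len(entries)
    with_identity = sum(
        1 for e in entries if e.get("user") and e["user"] not in SERVICE_ACCOUNTS
    )
    return {
        "total_requests": total,
        # share of requests attributable to an individual user (target: > 0.99)
        "passthrough_rate": with_identity / total if total else 0.0,
        "unauthorized_attempts": sum(1 for e in entries if e.get("status") in (401, 403)),
        "rate_limited": sum(1 for e in entries if e.get("status") == 429),
    }


sample = [
    {"user": "alice", "status": 200},
    {"user": "bob", "status": 403},
    {"user": "ai_service", "status": 200},
    {"user": "alice", "status": 429},
]
metrics = compliance_metrics(sample)
print(metrics["passthrough_rate"])  # 0.75
```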

Tools like Grafana are excellent for creating these dashboards. They allow you to filter data by user identity, making it easy to see which teams or individuals are driving API usage. Monitoring trends like 429 errors can help identify potential brute-force attacks, while tracking authentication events can reveal unauthorized access attempts. To protect privacy, ensure sensitive data like PII, API keys, and account numbers are masked or stripped from the dashboard. These visualizations turn raw audit data into actionable insights, demonstrating that your governance framework is operating as intended.

Overcoming Common Implementation Challenges

Even with transparency and audit trails in place, rolling out policy-driven APIs for AI isn't without hurdles. Organizations often find themselves caught between maintaining strict governance and keeping up development speed. Add to that the challenge of managing policies across fragmented infrastructures and keeping pace with evolving regulations, and it's clear why 62% of organizations identify data governance as the biggest barrier to AI adoption. These challenges, however, can be tackled effectively by embedding governance into everyday workflows. Below, we’ll explore how to strike a balance between governance and speed, centralize control in complex environments, and stay agile in the face of regulatory changes.

Balancing Governance and Development Speed

Governance is critical, but when it’s overly rigid or generic, it can bog down development and drive teams toward shadow IT. The key is to automate governance in a way that developers don't feel hindered. One effective approach is policy-as-code, where API access rules are defined in version-controlled files using tools like Open Policy Agent or JSON schemas. By integrating these into CI/CD pipelines, organizations can automate validations and significantly speed up development. For example, companies using this method have reported shrinking deployment cycles from months to minutes, with some achieving deployment time reductions of up to 40%.

Self-service developer portals are another game-changer. These portals allow developers to request API keys or use pre-approved endpoint templates without waiting for admin approval, reducing onboarding delays. Pair this with progressive governance, where lower-risk APIs undergo lighter reviews compared to those dealing with sensitive data, and you’ve got a system that keeps teams agile while safeguarding critical assets.

"Speed and safety are no longer tradeoffs when the platform embeds governance into every request path" – Terence Bennett, CEO, DreamFactory

Centralizing Governance in Distributed Environments

In hybrid and multi-cloud setups, managing policies can feel like trying to herd cats. Visibility gaps and inconsistent enforcement are common issues. A centralized API gateway can act as a single trust layer across all environments - whether on-premises, AWS, Azure, private clouds, or edge setups. By consolidating scattered API policies into federated dashboards, organizations gain unified visibility and control.

This gateway should also support unified policy engines with identity passthrough (OAuth, LDAP, SSO), ensuring user credentials flow seamlessly to backend systems regardless of their hosting environment. AI tools can further simplify this process by analyzing API usage patterns and suggesting reusable policy templates. For example, AI-driven platforms have analyzed APIs across AWS, Azure, and on-premises systems to auto-generate deployable policy templates, cutting down on manual reviews and providing real-time insights. The result? Consistent enforcement with less complexity.

Adapting to Changing Regulations

Regulations like GDPR, HIPAA, and new AI-specific laws are constantly evolving, and keeping up can feel overwhelming. That’s where modular policy designs come into play. By creating pluggable rule sets tailored for regional compliance - such as EU data residency or California privacy laws - you can update policies without overhauling your entire governance framework. Regular, agile reviews (e.g., quarterly) help incorporate new compliance signals, like unexpected spikes in data movement.

From the start, build request-level authorization with content-based restrictions and token budgets to handle shifting regulations effectively. Dashboards that flag compliance signals, such as unusual access patterns or data egress spikes, allow teams to respond quickly and avoid violations. Token-based rate limiting has proven especially effective, reducing policy violations by 50% compared to simple request-count limits in scaled AI environments. Treating compliance as an ongoing process, rather than a one-time task, ensures organizations stay ahead of regulatory changes without scrambling to catch up later.
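A token budget differs from a request-count limit in that each call is charged by the number of model tokens it consumes. One way to sketch this in Python is a token bucket that refills continuously up to a tokens-per-minute cap; the class name and numbers are illustrative assumptions.

```python
import time


class TokenBudget:
    """Token bucket sized in model tokens per minute (TPM) rather than requests."""

    def __init__(self, tokens_per_minute, clock=time.monotonic):
        self.capacity = float(tokens_per_minute)
        self.refill_per_second = self.capacity / 60.0
        self.available = self.capacity
        self.clock = clock
        self.last_refill = clock()

    def _refill(self):
        """Add back tokens for the time elapsed since the last check, up to capacity."""
        now = self.clock()
        elapsed = now - self.last_refill
        self.available = min(self.capacity, self.available + elapsed * self.refill_per_second)
        self.last_refill = now

    def try_consume(self, tokens):
        """Charge a request's token cost; return False if the budget cannot cover it."""
        self._refill()
        if tokens > self.available:
            return False
        self.available -= tokens
        return True
```

With this shape, one large-context request can use up the budget that would otherwise cover many small ones, which is the behavior a plain request counter cannot express.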

Conclusion and Key Takeaways

Recap of Best Practices

Policy-driven APIs are essential for ensuring secure AI data access. A staggering 97% of organizations experiencing AI-related breaches lacked proper access controls, while only 7% have fully implemented governance frameworks, even though 93% are actively using AI. The practices outlined here - like forming governance teams, adopting zero-trust authentication, automating policy enforcement, and maintaining comprehensive audit trails - directly address these vulnerabilities. Together, they enhance security and regulatory compliance while supporting your broader governance strategy.

One critical recommendation is to avoid direct SQL queries and instead rely on vetted, parameterized REST endpoints for AI agents. Additionally, using identity passthrough ensures AI respects user-specific entitlements rather than operating under overly broad service accounts. Every action must be authenticated, authorized, validated, monitored, and logged.

The Role of Tools Like DreamFactory

DreamFactory offers a robust solution, acting as a secure abstraction layer between AI and your databases. Instead of allowing AI to directly query your database, it routes queries through governed APIs that include features like identity passthrough, role-based access control (RBAC), field-level masking, and audit logging. This tool integrates seamlessly with existing security systems such as OAuth, LDAP, and SSO, and supports deployment options like on-premises, private cloud, edge, or hybrid environments - ensuring your data stays within your infrastructure.

Kevin McGahey, Solutions Engineer and Product Lead at DreamFactory, emphasizes this point:

"Don't let LLMs write SQL. Put a secure API gateway between AI and your databases. Enforce zero-trust, parameterization, RBAC, masking, and full-fidelity audit logs."

With over 30 connectors - including Snowflake, Databricks, Oracle, SQL Server, and MongoDB - and a built-in MCP server for AI agents, DreamFactory simplifies API development. It transforms what used to be manual coding into a policy-driven configuration process. This secure foundation enables enterprises to confidently advance their AI governance initiatives.

Next Steps for Enterprises

Start by evaluating your current API governance maturity. Ask yourself:

- Do you have specific policies for AI data access?

- Are you relying on service accounts, or have you implemented identity passthrough?

- Can you audit every AI-driven query?

A good starting point is a pilot project: define a clear AI use case, identify the necessary data, create secure APIs with proper RBAC, configure policies, and deploy behind a gateway. Use short-lived tokens for AI agents and enforce a least-privilege access model that denies everything by default. Organizations with mature governance frameworks can achieve data access up to 36% faster by establishing secure, well-documented pathways.

In this new AI-driven world, success hinges not on the size of your models but on how safely and consistently you can expose data to AI agents.

FAQs

What makes an API “policy-driven” for AI?

A policy-driven API for AI is designed to enforce governance, access controls, and security policies. By regulating data access, it ensures that AI systems interact with data strictly according to predefined rules and permissions. This setup protects sensitive information and helps maintain compliance with an organization's internal policies.

How does identity passthrough work in an AI API?

Identity passthrough ensures that an authenticated user's identity travels through every layer of a request. This allows the AI system to apply role-based access control (RBAC) policies, which limit data access strictly to authorized users based on their identity. By doing so, it offers a secure method for managing permissions while minimizing unnecessary exposure of sensitive information.

What’s the fastest way to pilot policy-driven AI data access safely?

The fastest way to securely test policy-driven AI data access is by using a managed platform with built-in security features like identity passthrough and role-based access control (RBAC). These tools help ensure that AI agents only access data they're authorized to, keeping everything compliant with regulations.

For instance, a platform like DreamFactory streamlines this process by offering governed API endpoints, integrating with existing authentication systems, and supporting secure protocols. This makes it possible to test quickly and safely without compromising security.

Kevin Hood is an accomplished solutions engineer specializing in data analytics and AI, enterprise data governance, data integration, and API-led initiatives.
