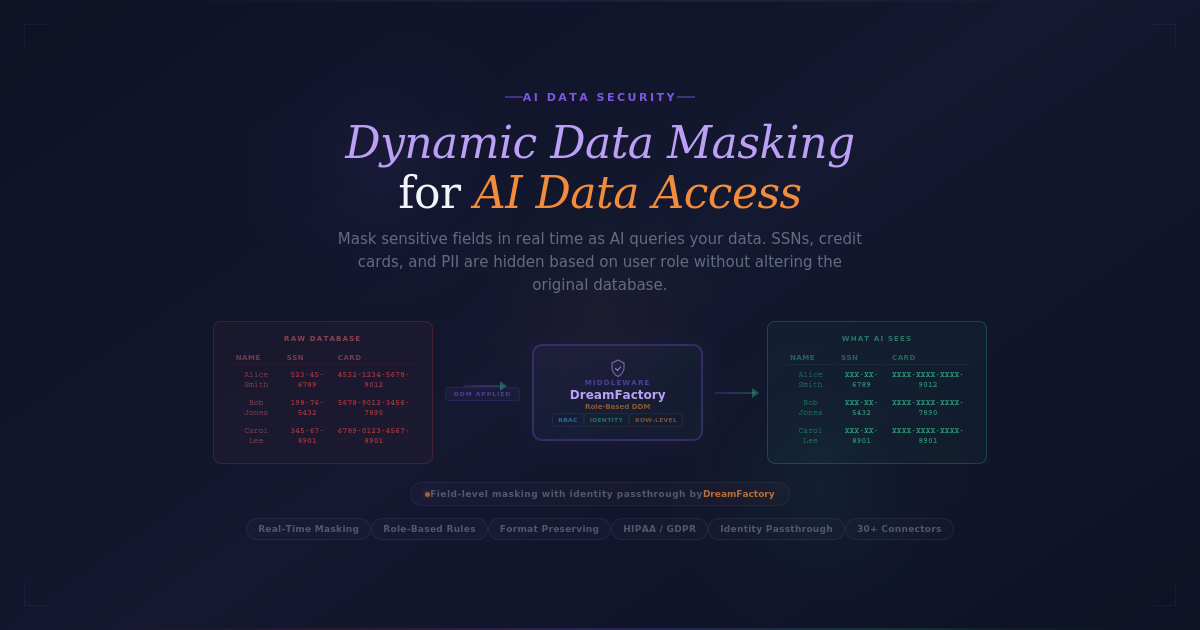

Dynamic Data Masking (DDM) protects sensitive information in real time when AI systems access enterprise data. It intercepts database queries and applies masking rules based on user roles, so sensitive fields like Social Security numbers or credit card details are hidden without altering the original data. This approach reduces accidental exposure, supports compliance with regulations like HIPAA and GDPR, and limits what attacks like prompt injection (successful 91% of the time) can extract. Unlike static masking, which permanently modifies data, DDM applies rules at query runtime, maintaining data integrity for AI analysis.

DreamFactory is a secure, self-hosted enterprise data access platform that provides governed API access to any data source, connecting enterprise applications and on-prem LLMs with role-based access and identity passthrough.

Key Takeaways:

- Real-Time Protection: Sensitive data is masked dynamically at query runtime.

- Compliance-Friendly: DDM supports audit trails by linking AI queries to user credentials.

- Role-Based Rules: Masking adapts to user roles and permissions.

- Maintains Data Structure: Ensures AI systems can analyze data relationships without exposing sensitive details.

- Implementation Steps: Identify sensitive data, define masking policies, use middleware, integrate identity systems, and monitor performance.

By combining DDM with tools like API gateways and identity passthrough, enterprises can secure live AI interactions while meeting regulatory demands.

How Dynamic Data Masking Protects AI Data Access

Dynamic Data Masking (DDM) adds an extra layer of protection by intercepting queries in real time and applying masking rules to sensitive data before it reaches the AI system. This process happens at the API gateway or middleware level, where sensitive fields are redacted based on the requestor's authorization. Importantly, the original data in the database stays untouched - only the query results are affected. This dynamic approach is essential for protecting sensitive information when AI systems handle live queries.

Real-Time Anonymization During AI Queries

DDM not only shields sensitive data but also helps meet regulatory requirements. When an AI agent queries a database, DDM identifies fields like Social Security numbers, credit card details, or medical records and replaces them with masked values on the fly. For instance, a customer service AI might see a credit card number as "XXXX-XXXX-XXXX-4532" instead of the full number. This field-level masking ensures that sensitive information doesn’t appear in AI logs, training datasets, or cached outputs.
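As a rough illustration of the field-level masking described above, the sketch below hides all but the last four digits of a card number while keeping its separators. The function name `mask_card_number` and the sample card number are assumptions made for this example, not part of any specific product.

```python
def mask_card_number(card_number: str, visible: int = 4) -> str:
    """Replace every digit except the last `visible` ones with 'X'.

    Separators such as '-' or spaces are kept so the masked value
    has the same layout as the original.
    """
    total_digits = sum(ch.isdigit() for ch in card_number)
    to_mask = max(total_digits - visible, 0)
    masked = []
    for ch in card_number:
        if ch.isdigit() and to_mask > 0:
            masked.append("X")
            to_mask -= 1
        else:
            masked.append(ch)
    return "".join(masked)


# Hypothetical card number, used only for illustration.
print(mask_card_number("4111-1111-1111-4532"))  # XXXX-XXXX-XXXX-4532
```

Because the function only transforms the value on its way out, the stored number is never modified.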

By implementing identity passthrough, DDM ties audit logs to the original user, creating clear accountability and simplifying compliance efforts. Additionally, row-level security ensures that query results are filtered based on user attributes. For example, a sales representative’s AI assistant would only access records for their region, while a manager’s assistant could retrieve data for multiple regions.

Role-Based Masking Policies

DDM uses role-based masking rules tailored to the user’s role, the type of data being accessed, and the context of the query. Role-Based Access Control (RBAC) connects user identities with enforceable permissions, ensuring that AI agents adhere to specific entitlements rather than operating under broad, unrestricted accounts. For example, a policy enforcement point can modify AI-generated queries in real time - adding clauses like WHERE region = {user.region} - to automatically filter results based on the authenticated user’s attributes. This dynamic adjustment removes the need for separate database views or hardcoded filters.
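One way a policy enforcement point might add such a region filter is sketched below, using Python's built-in `sqlite3` module as a stand-in database. The helper `scoped_query`, the `accounts` table, and the user dictionary are all hypothetical. The region is bound as a query parameter rather than pasted into the SQL text.

```python
import sqlite3


def scoped_query(base_sql, user):
    """Wrap a query so only rows in the user's region are returned.

    Assumption: base_sql comes from a trusted, server-side template.
    The user's region is passed as a bound parameter, never formatted
    into the SQL string.
    """
    sql = f"SELECT * FROM ({base_sql}) AS q WHERE q.region = ? ORDER BY q.id"
    return sql, (user["region"],)


# In-memory stand-in for an enterprise database (illustrative data).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id INTEGER, region TEXT, owner TEXT)")
conn.executemany(
    "INSERT INTO accounts VALUES (?, ?, ?)",
    [(1, "west", "Ana"), (2, "east", "Bo"), (3, "west", "Cy")],
)

sql, params = scoped_query(
    "SELECT id, region, owner FROM accounts", {"region": "west"}
)
rows = conn.execute(sql, params).fetchall()
print(rows)  # [(1, 'west', 'Ana'), (3, 'west', 'Cy')]
```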

Maintaining Data Relationships for AI

Effective data masking goes beyond simple redaction - it ensures that the structure and relationships within the data remain intact for AI analysis. Format preservation keeps masked values consistent with the original layout, preventing syntax errors or logic issues during AI processing.

Referential integrity is another critical factor. Primary and foreign keys must be masked consistently across all tables, allowing AI systems to perform accurate joins and relational analysis without exposing sensitive details. Deterministic algorithms ensure that identical input values are masked the same way every time, enabling AI to track unique entities across queries without revealing their true identities. Additionally, semantic integrity ensures that masked values remain logical and realistic - for example, a masked salary would stay within plausible ranges, or a masked age would fall between 0 and 120. This attention to detail ensures that AI-generated insights remain accurate and relevant for business needs.
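A minimal sketch of deterministic masking, assuming a keyed hash (HMAC-SHA256) as the masking function, shows why joins survive: the same key value always maps to the same token in every table. The key, the `cust_` prefix, and the sample records are assumptions for illustration; a real deployment would load the key from a secrets manager.

```python
import hashlib
import hmac

# Assumption: a secret key managed outside the code in practice.
SECRET_KEY = b"example-key-for-illustration-only"


def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Map a value to a token; the same input and key give the same token."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "cust_" + digest[:16]


customers = [{"customer_id": "C-1001", "segment": "retail"}]
orders = [
    {"customer_id": "C-1001", "total": 250},
    {"customer_id": "C-2002", "total": 90},
]

masked_customers = [
    {**c, "customer_id": pseudonymize(c["customer_id"])} for c in customers
]
masked_orders = [
    {**o, "customer_id": pseudonymize(o["customer_id"])} for o in orders
]

# The join still works because both tables used the same function and key.
known_ids = {c["customer_id"] for c in masked_customers}
joined = [o for o in masked_orders if o["customer_id"] in known_ids]
print(len(joined))  # 1
```

Using a keyed hash rather than a plain hash means someone without the key cannot rebuild the mapping by hashing guessed identifiers.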

How to Implement Dynamic Data Masking

To set up Dynamic Data Masking (DDM), you need to locate sensitive data, establish masking rules, and maintain continuous oversight to ensure everything runs smoothly.

Step 1: Identify and Classify Sensitive Data

Start by mapping all data sources. This means listing every table, view, and external system that supports your AI projects. By doing this, you’ll get a clear understanding of where sensitive information resides across your infrastructure.

Next, use metadata tags to label sensitive columns. For example, columns containing Social Security numbers could be tagged as "PII-SSN." This lets you apply a single masking rule to similar columns across multiple databases, saving time and ensuring consistency.
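The tagging idea could look something like the sketch below, where one rule attached to the "PII-SSN" tag covers differently named columns in two databases. The catalog layout, database and column names, and the `mask_value` helper are hypothetical.

```python
# Hypothetical catalog: (database, table, column) -> classification tag.
COLUMN_TAGS = {
    ("crm", "customers", "ssn"): "PII-SSN",
    ("hr", "employees", "social_security_no"): "PII-SSN",
}


def mask_ssn(value: str) -> str:
    """Show only the last four characters of a Social Security number."""
    return "***-**-" + value[-4:]


# One rule per tag, shared by every column that carries the tag.
RULES = {"PII-SSN": mask_ssn}


def mask_value(database: str, table: str, column: str, value: str) -> str:
    """Apply the rule for the column's tag, or return the value unchanged."""
    tag = COLUMN_TAGS.get((database, table, column))
    rule = RULES.get(tag)
    return rule(value) if rule else value


print(mask_value("crm", "customers", "ssn", "123-45-6789"))  # ***-**-6789
print(mask_value("hr", "employees", "social_security_no", "987-65-4321"))  # ***-**-4321
```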

Step 2: Define Masking Policies

After identifying sensitive data, the next step is to decide how to mask it. Set up masking rules based on your security requirements, user roles, and compliance standards. For example, deterministic masking ensures that the same input value is masked consistently across all tables. If "12345" is masked as "ABC789" in one location, it will appear as "ABC789" everywhere else, which is crucial for maintaining data correlations in machine learning models.

You can also create context-aware policies that adjust masking levels based on specific conditions like user roles or geographic location. For instance, a marketing analyst might see partially masked email addresses (e.g., "j***@example.com"), while a compliance officer gets full visibility, and an AI tool only processes domain names. For cases where the original data structure must remain intact, use format-preserving encryption (FPE).
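The email example above could be expressed roughly as follows. The role names (`marketing_analyst`, `compliance_officer`, `ai_tool`) are assumptions chosen to mirror the scenario, and unknown roles fall back to full masking.

```python
def mask_email(email: str, role: str) -> str:
    """Return a view of an email address that depends on the caller's role."""
    local, _, domain = email.partition("@")
    if role == "compliance_officer":
        return email                       # full visibility
    if role == "marketing_analyst":
        return f"{local[:1]}***@{domain}"  # partially masked
    if role == "ai_tool":
        return domain                      # domain name only
    return "***"                           # default: reveal nothing


for role in ("marketing_analyst", "compliance_officer", "ai_tool", "guest"):
    print(role, "->", mask_email("jane@example.com", role))
```

Defaulting to the most restrictive output for unrecognized roles keeps a misconfigured role from silently receiving unmasked data.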

Step 3: Deploy a Proxy or Middleware Layer

Implement a proxy or middleware layer to handle real-time data masking. This layer sits between your AI systems and the databases, intercepting queries and applying masking rules before sending results back. DreamFactory provides this middleware layer by generating governed REST APIs for any data source with role-based access control, identity passthrough, and field-level security built in. With support for over 30 connectors, the platform allows you to implement DDM without direct database access, ensuring that AI systems only work with masked data while maintaining performance.
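To make the middleware idea concrete, here is a generic sketch (not DreamFactory's implementation) of a function that runs a query and masks the result rows before returning them. It uses `sqlite3` as a stand-in database; the `fetch_masked` helper, the role names, and the `patients` table are hypothetical.

```python
import sqlite3

# Hypothetical policy: which columns are masked, and for whom.
MASKED_COLUMNS = {"ssn": lambda v: "***-**-" + v[-4:]}
UNMASKED_ROLES = {"compliance_officer"}


def fetch_masked(conn, sql, params, role):
    """Run the query, then mask sensitive columns in the result rows."""
    cursor = conn.execute(sql, params)
    columns = [d[0] for d in cursor.description]
    results = []
    for raw in cursor.fetchall():
        row = dict(zip(columns, raw))
        if role not in UNMASKED_ROLES:
            for col, rule in MASKED_COLUMNS.items():
                if row.get(col) is not None:
                    row[col] = rule(row[col])
        results.append(row)
    return results


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE patients (id INTEGER, name TEXT, ssn TEXT)")
conn.execute("INSERT INTO patients VALUES (1, 'Ana', '123-45-6789')")

agent_view = fetch_masked(conn, "SELECT id, name, ssn FROM patients", (), "ai_agent")
stored = conn.execute("SELECT ssn FROM patients").fetchone()[0]
print(agent_view)  # the AI agent sees the masked SSN
print(stored)      # the stored value is unchanged
```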

Step 4: Integrate with Identity Providers

Tie your DDM setup to existing authentication systems like OAuth, LDAP, or SSO. This ensures that masking policies are enforced based on the identity of the user making the request. Identity passthrough links every query to a specific user, adding a layer of accountability that’s essential for compliance and audit purposes. Once integrated, run tests to confirm that the policies are effectively securing access to sensitive data.
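As a sketch of how identity passthrough can feed an audit trail, the function below builds a log entry from identity claims. It assumes the token has already been verified by your identity provider integration; the claim names (`sub`, `roles`), the agent identifier, and the endpoint path are illustrative assumptions.

```python
import json
from datetime import datetime, timezone


def audit_record(claims: dict, agent_id: str, endpoint: str) -> str:
    """Build a JSON audit entry that ties a request to the original user.

    Assumption: `claims` comes from a token that was verified upstream.
    """
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": claims["sub"],
        "roles": claims.get("roles", []),
        "agent": agent_id,
        "endpoint": endpoint,
    }
    return json.dumps(entry)


record = audit_record(
    {"sub": "jdoe", "roles": ["sales_rep"]},
    agent_id="support-bot",
    endpoint="/api/customers",
)
print(record)
```

Recording both the human user and the AI agent means a reviewer can tell who asked and which system acted on their behalf.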

Step 5: Test, Monitor, and Optimize

Before deploying, test your masking policies thoroughly. Simulate queries from different user roles to confirm that the rules work as intended and that AI systems still receive data they can use. Keep an eye on system performance to avoid latency issues, and refine your policies as your data environment changes. Use request tracking and alert systems to spot potential violations quickly and ensure traceability.
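Role simulation can start as a small table of expected outputs, as in this sketch. The `mask_ssn` policy and the role names are stand-ins for whatever rules you have defined; the point is that each role's expected view is written down and checked automatically.

```python
def mask_ssn(value: str, role: str) -> str:
    """Stand-in policy: only compliance officers see the full value."""
    if role == "compliance_officer":
        return value
    return "***-**-" + value[-4:]


# (role, raw value, expected output) for each simulated request.
CASES = [
    ("ai_agent", "123-45-6789", "***-**-6789"),
    ("sales_rep", "123-45-6789", "***-**-6789"),
    ("compliance_officer", "123-45-6789", "123-45-6789"),
]


def run_policy_checks():
    """Return the cases where the policy output differs from expectations."""
    failures = []
    for role, raw, expected in CASES:
        actual = mask_ssn(raw, role)
        if actual != expected:
            failures.append((role, expected, actual))
    return failures


print(run_policy_checks())  # [] when every role sees what it should
```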

Best Practices for Dynamic Data Masking in Enterprises

Use DDM with Enterprise Data Access Platforms

Integrating Dynamic Data Masking (DDM) with a centralized data access platform can streamline and secure your operations. With a platform such as DreamFactory, AI systems do not connect directly to your databases; instead, you route their queries through governed REST API endpoints. This layer applies masking rules, carries user identity, and logs every request.

Identity passthrough ensures that the authenticated user's credentials - whether via JWT, OAuth, or SAML - are passed along to the database. Audit logs then accurately reflect the identity of the user or AI agent making each request, which is critical for compliance and forensic analysis, and the same identity can drive the DDM masking rules.

Enforce Least-Privilege Access

Following the principle of least privilege, AI systems should only access the data necessary to perform their tasks. Implement Role-Based Access Control (RBAC) at multiple levels - table, column, and row - to ensure each AI agent retrieves only the data it's authorized to see. For instance, dynamic placeholders like WHERE region = {user.region} can automatically enforce row-level filters.
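Table-level and column-level restrictions can be sketched as an allowlist per agent role, as below. The role names, table, and columns are hypothetical; anything not explicitly granted is denied.

```python
# Hypothetical grants: role -> table -> columns the role may read.
PERMISSIONS = {
    "support_bot": {"customers": {"id", "name", "region"}},
    "finance_bot": {"customers": {"id", "name", "region", "credit_limit"}},
}


def project_row(role: str, table: str, row: dict) -> dict:
    """Return only the columns this role may read from this table."""
    allowed = PERMISSIONS.get(role, {}).get(table)
    if allowed is None:
        # No grant for this table at all: deny rather than guess.
        raise PermissionError(f"{role} may not read {table}")
    return {col: val for col, val in row.items() if col in allowed}


row = {"id": 7, "name": "Ana", "region": "west", "credit_limit": 5000}
print(project_row("support_bot", "customers", row))
print(project_row("finance_bot", "customers", row))
```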

To keep your masking policies current, automate data discovery and classification. As new data enters your systems, continuous scanning can identify and tag sensitive information, enabling masking rules to be applied automatically. This reduces the risk of gaps in your data protection strategy as your environment evolves.

Maintain Performance and Compliance for AI Workloads

AI queries often differ from standard API calls, especially under heavy workloads. For example, large language model (LLM) inference can take significantly longer, so increasing API timeouts to at least 300 seconds (5 minutes) is crucial to prevent premature failures. Standard 30-second timeouts may not suffice for these more complex operations.

To minimize processing overhead, pass raw JSON responses directly, avoiding unnecessary decode and re-encode steps. Striking a balance between real-time performance and regulatory requirements is essential. Test your masking policies under realistic AI workloads and monitor query performance closely. Adjust caching strategies as needed to maintain efficient response times without compromising security or compliance.

Comparison of Data Masking Techniques

Dynamic Data Masking Techniques Comparison for AI Security

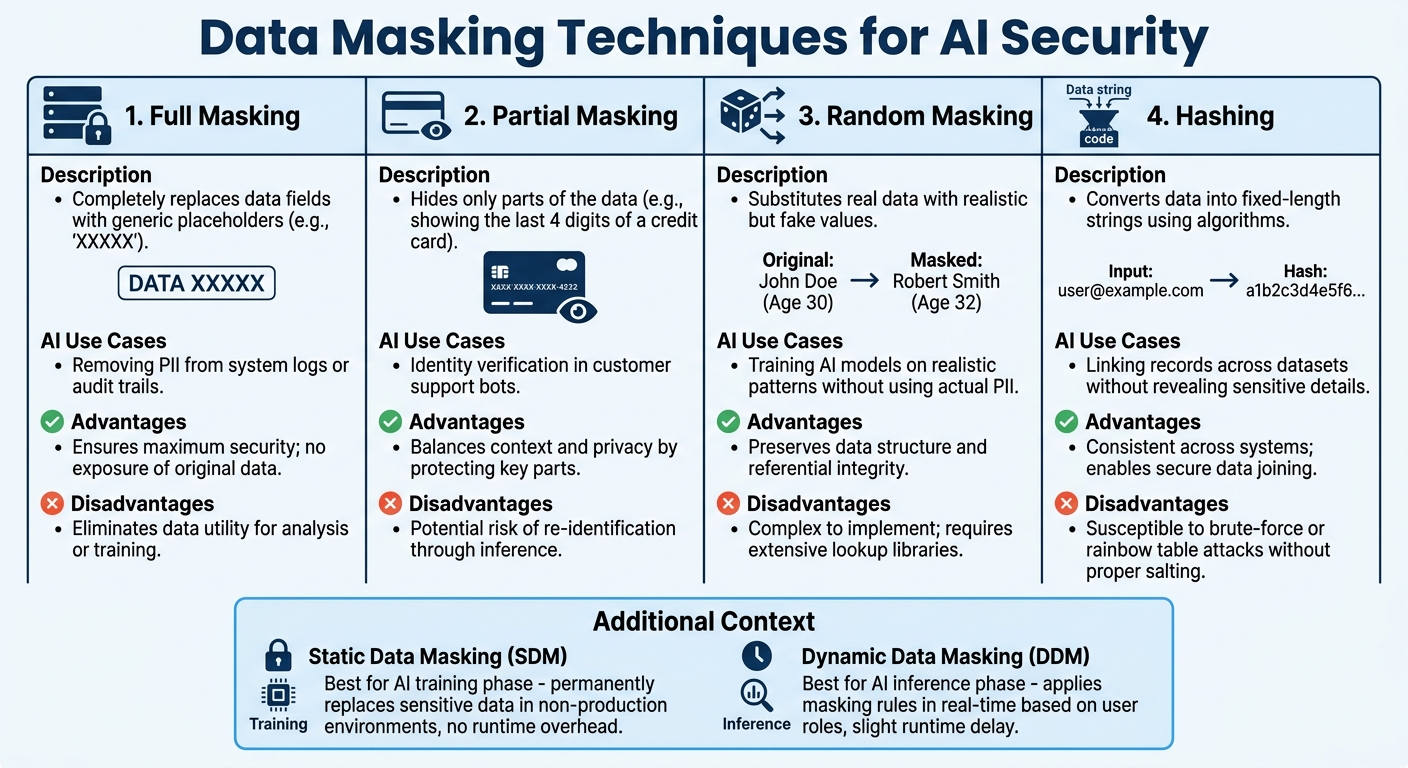

Securing AI data access means selecting the right masking approach for your needs. Static Data Masking (SDM) permanently replaces sensitive information in non-production environments, creating sanitized database copies ideal for AI training. On the other hand, Dynamic Data Masking (DDM) applies masking rules in real time, based on user roles, while leaving the underlying data intact. This makes DDM particularly useful for live AI inference and prompt handling.

Your choice of masking technique depends on the phase of your AI workflow. SDM works best during the training phase, ensuring models don’t memorize sensitive details. Meanwhile, DDM is better suited for the inference phase, where it can mask sensitive user inputs before processing by language models. It’s worth noting that SDM doesn’t add any runtime overhead, whereas DDM may introduce slight delays due to its real-time nature.

Masking Techniques Comparison Table

| Technique | Description | AI Use Cases | Advantages | Disadvantages |
|---|---|---|---|---|
| Full Masking | Completely replaces data fields with generic placeholders (e.g., "XXXXX"). | Removing PII from system logs or audit trails. | Ensures maximum security; no exposure of original data. | Eliminates data utility for analysis or training. |
| Partial Masking | Hides only parts of the data (e.g., showing the last 4 digits of a credit card). | Identity verification in customer support bots. | Balances context and privacy by protecting key parts. | Potential risk of re-identification through inference. |
| Random Masking | Substitutes real data with realistic but fake values. | Training AI models on realistic patterns without using actual PII. | Preserves data structure and referential integrity. | Complex to implement; requires extensive lookup libraries. |
| Hashing | Converts data into fixed-length strings using algorithms. | Linking records across datasets without revealing sensitive details. | Consistent across systems; enables secure data joining. | Susceptible to brute-force or rainbow table attacks without proper salting. |

Organizations often combine SDM for training and DDM for live AI interactions to ensure comprehensive protection. Additionally, many are exploring synthetic datasets for training purposes. These datasets mimic real data structures and logic but don’t include any actual sensitive information, offering another layer of security.

As DataSunrise emphasizes:

"Masking is not just a compliance checkbox. It's a design decision that defines how safely your AI operates."

Conclusion

Dynamic data masking offers a way to secure AI data access in real time without needing to overhaul your database. By enforcing masking rules during query execution - based on who is making the request, whether it's a person or an AI system - you can protect sensitive information while still delivering the necessary insights.

To implement this effectively, start by identifying sensitive data, setting up masking policies, and deploying a middleware layer that integrates with your existing identity providers. Regularly monitoring performance ensures your system remains secure and efficient.

For enterprises, combining dynamic data masking with a governed data access platform adds an extra layer of protection, preventing AI systems from directly accessing database credentials. DreamFactory provides this by enforcing role-based access control, identity passthrough, field-level security, and full audit logging on every API endpoint. Begin with read-only access to reduce risks, and once your security measures are proven, consider expanding to transactional operations. Centralized policy management simplifies the process, allowing masking rules to cover thousands of columns without altering your database schema.

FAQs

Will DDM slow down AI queries in production?

Dynamic Data Masking (DDM) operates in real time, intercepting and obscuring sensitive information as it's accessed. Because masking runs on every query, it adds some processing overhead, but when implemented and tuned correctly the added latency is typically slight and should not noticeably slow AI queries. Test under realistic AI workloads to confirm that DDM delivers robust data security without compromising system performance.

How do you keep joins working when keys are masked?

To keep joins intact when keys are masked, you need to define specific join parameters: target table, target key, source table, and source key. This setup ensures the join operates on the original keys before they are masked, maintaining the integrity of the relationships between tables. By configuring these parameters correctly, you can safeguard sensitive data while preserving accurate and reliable data connections.
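The sketch below illustrates the join-then-mask order with `sqlite3`: the database joins on the original keys, and masking is applied only to the rows returned. The table names, sample data, and the `mask_id` helper are assumptions for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id TEXT, name TEXT);
    CREATE TABLE orders (order_id INTEGER, customer_id TEXT, total REAL);
    INSERT INTO customers VALUES ('C-1001', 'Ana');
    INSERT INTO orders VALUES (1, 'C-1001', 250.0);
    INSERT INTO orders VALUES (2, 'C-1001', 90.0);
""")


def mask_id(value: str) -> str:
    """Keep the 'C-' prefix and hide the rest of the identifier."""
    return value[:2] + "X" * (len(value) - 2)


# The join runs inside the database on the original, unmasked keys.
rows = conn.execute("""
    SELECT o.order_id, c.customer_id, o.total
    FROM orders AS o
    JOIN customers AS c ON o.customer_id = c.customer_id
    ORDER BY o.order_id
""").fetchall()

# Masking is applied afterwards, to the result set only.
masked = [(order_id, mask_id(cid), total) for order_id, cid, total in rows]
print(masked)  # [(1, 'C-XXXX', 250.0), (2, 'C-XXXX', 90.0)]
```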

Can DDM stop sensitive data from leaking through prompt injection?

Dynamic Data Masking (DDM) is a security feature designed to shield sensitive information by disguising or hashing it in real-time as users access it. While DDM limits data exposure, it doesn't directly prevent prompt injection attacks, which are crafted to extract sensitive information through malicious prompts. To combat prompt injection, organizations need additional layers of protection. Platforms like DreamFactory address this by routing all AI queries through governed REST APIs with parameterized endpoints, role-based access control, and identity passthrough, ensuring AI systems never execute raw SQL and only access data the authenticated user is entitled to see.

Konnor Kurilla is a technical engineer with experience supporting API-driven workflows, troubleshooting backend systems, and developing documentation that empowers users to understand and utilize their technology. Blending customer-focused thinking with hands-on technical skills, he helps bridge the gap between product complexity and user clarity.
